The 12 Best Embedding Models for RAG Systems in 2026

Discover the best embedding model for RAG with our 2026 guide. We rank top models on performance, cost, and use case for better retrieval.

The quality of your Retrieval-Augmented Generation (RAG) system hinges on one critical component: the embedding model. This model is the engine that translates your documents into a searchable vector space, directly impacting retrieval accuracy and, ultimately, the quality of your AI-generated answers. A suboptimal choice leads to irrelevant context, hallucinations, and poor responses, while the right model unlocks precise, context-aware results that make your application shine. Finding the best embedding model for RAG isn't about picking the one with the highest benchmark score; it's about matching the model's performance, cost, and operational footprint to your specific needs.

This guide provides a comprehensive, actionable breakdown of the top platforms and models for 2026. We move beyond marketing copy to offer a direct comparison of leading options from providers like OpenAI, Cohere, Google, and Voyage AI, alongside powerful open-weight alternatives available through Hugging Face and other platforms. You will find a detailed analysis of each option, covering:

- Performance Trade-offs: Balancing retrieval accuracy, indexing latency, and vector dimensionality.

- Cost Implications: Understanding the impact of token-based pricing versus self-hosting infrastructure on your budget.

- Actionable Use Cases: Pinpointing the best model for domain-specific tasks, multilingual needs, and balancing speed with precision.

- Implementation Tips: Practical advice on how to leverage each model's strengths, from batching APIs to choosing the right distance metric, to directly improve retrieval performance.

We'll cut through the noise to help you select, test, and deploy the ideal embedding model for your RAG pipeline. Each entry includes direct links and practical insights to help you move from raw documents to a production-ready retrieval system with confidence.

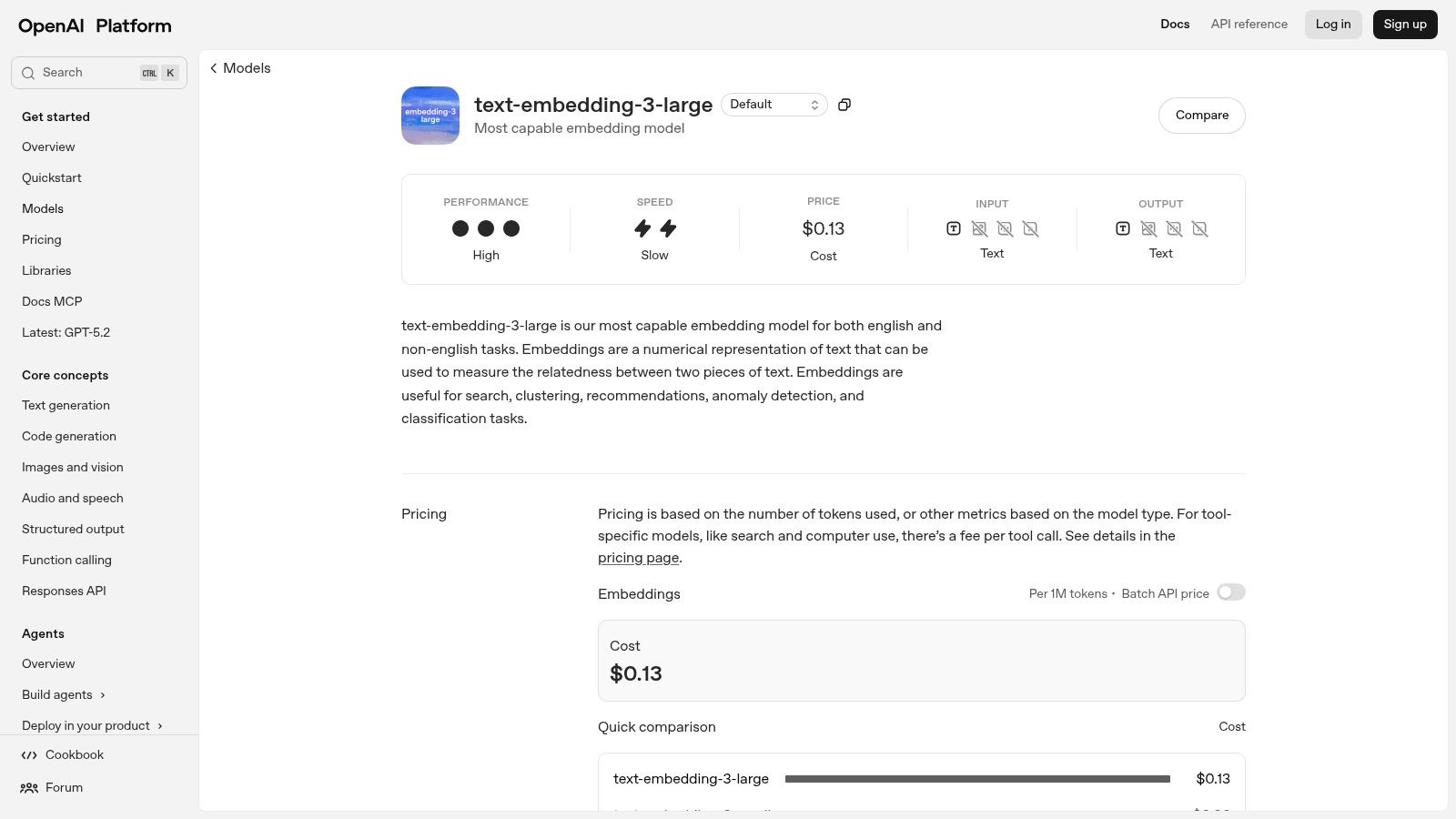

1. OpenAI

OpenAI offers a suite of production-grade embedding models that are a go-to choice for developers seeking a balance of high performance, scalability, and ease of implementation. Their latest models, text-embedding-3-large and text-embedding-3-small, are specifically optimized for retrieval augmented generation (RAG) and serve as a reliable foundation for building robust semantic search systems. Their primary advantage lies in their seamless integration into the broader AI ecosystem; nearly every major RAG framework and vector database provides native, well-documented support for OpenAI's APIs.

This makes OpenAI an excellent starting point for teams prioritizing development speed and stability. You can quickly stand up a proof-of-concept and scale it to handle millions of documents without managing any infrastructure. While the closed-source nature means you cannot self-host, the platform provides a clear compliance and security story for enterprises.

Actionable Retrieval Insights

For bulk indexing, send multiple inputs per request to cut network overhead, and consider the asynchronous Batch API when lower cost matters more than turnaround time. The text-embedding-3 models support shortening the output dimensions, which can decrease vector database costs and potentially improve retrieval speed. Test different dimensions (e.g., 256, 1024) on your data to find the best balance of performance and cost. Since performance is tied to your overall RAG pipeline, always pair these powerful embeddings with a coherent chunking strategy to ensure the context retrieved is meaningful.
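To make the dimension trade-off concrete, here is a minimal sketch in plain Python. It assumes you already have a full-length vector and want to compare shorter variants offline: the vector is truncated and then rescaled to unit length so similarity scores stay comparable. The helper names `shorten_embedding` and `index_size_bytes` are illustrative and not part of any SDK, and the storage estimate assumes float32 values with no index overhead.

```python
import math

def shorten_embedding(vector, dim):
    """Keep the first `dim` components and rescale the result to unit length."""
    if not 0 < dim <= len(vector):
        raise ValueError("dim must be between 1 and len(vector)")
    head = vector[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        return list(head)  # an all-zero vector cannot be normalized
    return [x / norm for x in head]

def index_size_bytes(num_vectors, dim, bytes_per_value=4):
    """Raw vector storage only (assumes float32, ignores index overhead)."""
    return num_vectors * dim * bytes_per_value

full = [0.5, 0.5, 0.5, 0.5]          # toy stand-in for a full-length embedding
short = shorten_embedding(full, 2)   # two components, rescaled to unit length

size_256 = index_size_bytes(1_000_000, 256)
size_1024 = index_size_bytes(1_000_000, 1024)
```

Under these assumptions, one million vectors take roughly 1 GB at 256 dimensions versus roughly 4 GB at 1024, which is the kind of difference worth weighing against any drop in retrieval quality on your own data.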

Key Features:

- Two Model Sizes: text-embedding-3-large for maximum accuracy and text-embedding-3-small for a balance of cost and performance.

- Adjustable Dimensions: Control vector size to optimize for storage costs and retrieval speed.

- Scalable API: Built for production workloads with robust quotas and batch processing capabilities.

- Excellent Ecosystem Support: Widely supported in libraries like LangChain and LlamaIndex.

2. Google Vertex AI

For teams already invested in the Google Cloud ecosystem, Google Vertex AI provides a suite of managed embedding models that offer tight, first-party integration and enterprise-grade controls. It serves multiple model families, including Gemini, General Text Embeddings (GTE), and E5, allowing developers to select the best embedding model for RAG based on their specific needs for multilingual support or performance. The primary benefit is its seamless connection with other GCP services like Vertex AI Vector Search and Cloud Spanner, simplifying the infrastructure management for RAG systems.

This makes Google's offering a strong contender for organizations prioritizing security, compliance, and unified billing within their existing cloud environment. It eliminates the operational overhead of managing separate services, providing a single platform for both embedding generation and vector search. While the platform offers excellent documentation and Colab notebooks to get started, teams should be aware that model availability can vary by region as new versions are rolled out.

Actionable Retrieval Insights

The Vertex AI API supports batch requests up to 250 inputs, which is crucial for efficient bulk indexing. A key action is to project costs based on characters, not tokens, which can significantly impact budgeting for verbose document sets. To boost retrieval performance, use the task_type parameter in the API call (e.g., RETRIEVAL_DOCUMENT for indexing, RETRIEVAL_QUERY for searching) to specialize the embeddings for their specific role. This simple step can yield noticeable improvements in search relevance. Also, ensure your AI document processing workflow is optimized before embedding. For a seamless workflow, learn how to deploy apps from Google AI Studio, which can integrate directly with Vertex AI services.
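The batching and task-type advice can be sketched without any SDK. The snippet below only plans the requests: it splits texts into groups of at most 250, tags each group with a task type, and tallies characters for cost projection. `plan_embedding_requests` is a hypothetical helper, the dictionary layout is an assumption rather than the real request schema, and the actual API call is deliberately left out.

```python
def plan_embedding_requests(texts, task_type, max_batch=250):
    """Split texts into batches of at most max_batch, tagged with a task type."""
    if max_batch < 1:
        raise ValueError("max_batch must be at least 1")
    requests = []
    for start in range(0, len(texts), max_batch):
        batch = texts[start:start + max_batch]
        requests.append({
            "task_type": task_type,                      # e.g. RETRIEVAL_DOCUMENT
            "texts": batch,
            "characters": sum(len(t) for t in batch),    # input for cost estimates
        })
    return requests

docs = [f"chunk {i}" for i in range(600)]
doc_requests = plan_embedding_requests(docs, "RETRIEVAL_DOCUMENT")
query_requests = plan_embedding_requests(["how do refunds work?"], "RETRIEVAL_QUERY")
total_characters = sum(r["characters"] for r in doc_requests)
```

Keeping the character count next to each batch makes it easy to multiply by whatever rate applies to your model and region before you start a large indexing job.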

Key Features:

- Multiple Model Choices: Access to various models including the powerful Gemini and multilingual E5 series.

- GCP Integration: Native connection to Vertex AI Vector Search for a fully managed retrieval pipeline.

- Task-Specific Embeddings: Optimize vectors for either query or document roles to improve retrieval accuracy.

- Flexible API: Supports batch and online requests for different indexing and retrieval scenarios.

3. AWS Bedrock

For organizations deeply integrated into the Amazon Web Services ecosystem, AWS Bedrock provides a compelling, fully managed solution for deploying embedding models. It serves as a unified API gateway to various foundational models, including Amazon's own Titan Embeddings and popular third-party options like Cohere. This makes it an ideal choice for enterprises seeking to consolidate their AI stack, leverage existing AWS security and compliance controls, and simplify billing. The platform is designed for production RAG systems where data privacy and network security are paramount.

The primary advantage of Bedrock is its seamless integration with other AWS services. You can easily connect your embedding workflows to data sources in S3, vector stores in Amazon OpenSearch Serverless, or relational databases like Aurora. This streamlined architecture is a significant benefit for teams that want to build a secure, private, and scalable RAG pipeline using familiar infrastructure. While it offers less model variety than dedicated platforms, its focus on enterprise-grade stability and security makes it a top contender for the best embedding model for RAG deployments within AWS.

Actionable Retrieval Insights

To enable high-performance retrieval at scale, evaluate the Provisioned Throughput pricing model. While on-demand is good for starting, provisioned throughput provides predictable latency and cost for high-volume indexing and query loads. For sensitive data, a critical action is to configure VPC endpoints for Bedrock. This ensures that all API calls to generate embeddings stay within your private AWS network, enhancing security. When choosing a model, be aware that providers like Cohere on Bedrock may have different API signatures than their native versions; always check the Bedrock-specific documentation for correct implementation.

Key Features:

- Multi-Vendor Model Access: A single API to access models from Amazon, Cohere, and other providers.

- Flexible Pricing: Offers both on-demand and provisioned throughput to match usage patterns.

- Enterprise-Grade Security: Deep integration with AWS IAM, VPC, and KMS for robust compliance.

- Native AWS Integration: Simplifies connecting to data sources and vector stores within the AWS cloud.

4. Cohere

Cohere provides a mature, enterprise-focused suite of APIs specifically tuned for building high-performance retrieval systems. Their embedding models, available in both English and multilingual families, are designed from the ground up for semantic search, making them a strong contender for any production RAG application. A key differentiator for Cohere is its native Rerank API, which works in tandem with the embeddings to significantly improve the precision of search results by re-ordering the initial retrieved documents based on relevance.

This two-stage retrieve-and-rerank process is a best practice for advanced RAG and Cohere makes it exceptionally accessible. The platform is also built for enterprise needs, offering private cloud deployments and clear policies for production usage. While their trial keys are rate-limited, production keys unlock the full power of the platform, making it a reliable choice for scalable, business-critical applications.

Actionable Retrieval Insights

For a direct and significant boost in retrieval quality, implement a two-stage retrieval process. First, use the embed-v3 models to retrieve a larger set of candidate documents (e.g., top 25-50). Then, pass these candidates to the rerank endpoint to get a much more accurate top 3-5 results. This is a highly effective strategy for improving RAG accuracy. Also, specify the input_type parameter (search_document or search_query) when calling the embed API to generate specialized vectors that perform better for their intended task. The effectiveness of this system is deeply connected to how documents are prepared; understanding various chunking strategies for RAG is crucial to ensure the reranker has meaningful context to evaluate.
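The two-stage pattern can be sketched in a few lines of plain Python. This is an illustration of the control flow only: the vectors are toy values, `toy_rerank` is a placeholder word-overlap scorer standing in for a real rerank model, and `retrieve_then_rerank` is a hypothetical helper name rather than anything from Cohere's SDK.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def retrieve_then_rerank(query_text, query_vec, docs, doc_vecs, rerank_fn,
                         first_k=25, final_k=3):
    """Stage 1: top first_k by vector similarity. Stage 2: reorder with rerank_fn."""
    ranked = sorted(range(len(docs)),
                    key=lambda i: cosine(query_vec, doc_vecs[i]), reverse=True)
    candidates = ranked[:first_k]
    rescored = sorted(candidates,
                      key=lambda i: rerank_fn(query_text, docs[i]), reverse=True)
    return [docs[i] for i in rescored[:final_k]]

def toy_rerank(query, doc):
    """Placeholder scorer: fraction of query words found in the document."""
    q, d = set(query.lower().split()), set(doc.lower().split())
    return len(q & d) / len(q) if q else 0.0

docs = ["refund policy for annual plans",
        "shipping times by region",
        "how to request a refund"]
doc_vecs = [[0.9, 0.1], [0.1, 0.9], [0.8, 0.3]]   # toy embeddings
top = retrieve_then_rerank("request a refund", [1.0, 0.2], docs, doc_vecs,
                           toy_rerank, first_k=2, final_k=1)
```

In this toy data the vector stage ranks the refund policy document first, and the second stage promotes the document that actually answers the query. That reordering of a wider candidate set is the effect a real reranker is meant to deliver.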

Key Features:

- Integrated Rerank Models: Provides a dedicated API to refine search results, directly boosting RAG quality.

- Task-Specific Embeddings: Use input_type to specialize embeddings for documents or queries, improving relevance.

- Enterprise-Ready Deployments: Supports private cloud (AWS, GCP) and VPC deployments for enhanced security and compliance.

- Clear API Tiers: Differentiates between trial and production keys with explicit rate limits and lifecycle policies.

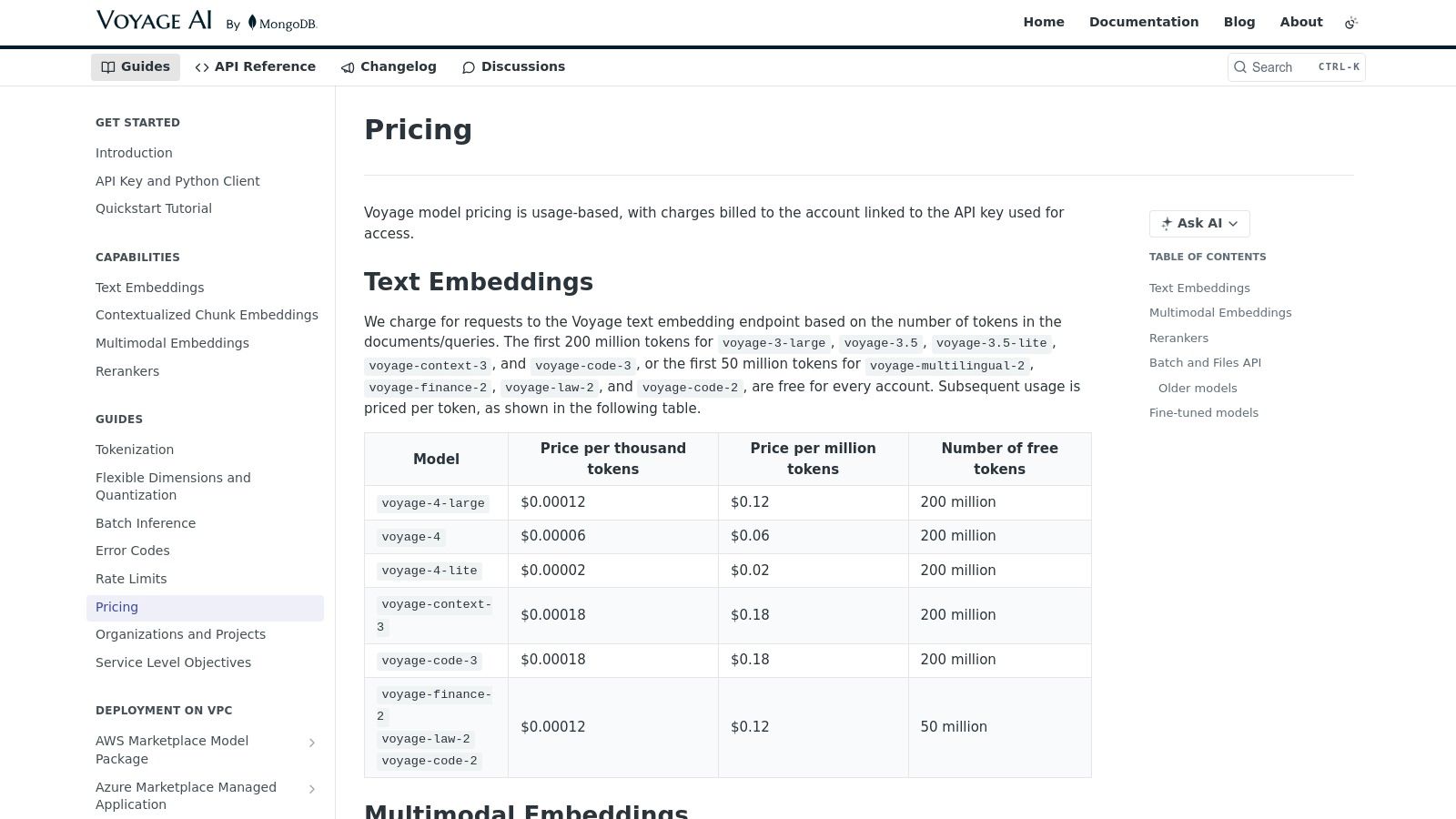

5. Voyage AI

Voyage AI has rapidly emerged as a strong contender, offering high-performance embedding models specifically fine-tuned for RAG applications. Their key differentiator is a focus on specialized, domain-adapted models and an exceptionally generous free tier, making them an attractive option for both startups and large-scale indexing projects. Models like voyage-large-2-instruct are engineered to understand instructional prompts, which can significantly improve retrieval relevance by allowing developers to guide the embedding process for specific use cases.

The platform stands out with its vertical-specific models for finance, law, and code, which can provide a performance edge over general-purpose embeddings without the need for extensive in-house fine-tuning. This specialization, combined with a dedicated model optimized for long-context document retrieval, makes Voyage a pragmatic choice for teams working with complex, domain-heavy information. While its ecosystem support is still growing compared to giants like OpenAI, its cost-performance profile is compelling for developers building the next generation of RAG systems.

Actionable Retrieval Insights

To maximize retrieval accuracy on specialized documents, use their domain-specific models (e.g., voyage-finance-2, voyage-law-2). This is a direct path to better performance without manual fine-tuning. For complex queries, leverage the voyage-large-2-instruct model by prefixing your text with a task-specific instruction, such as "Represent this document for retrieval on legal implications." This guides the model to produce more contextually relevant embeddings. When dealing with large documents, the voyage-large-2 model is effective due to its large context window, but you should still pair it with an intelligent chunking strategy to create focused, retrievable text segments.

Key Features:

- Domain-Specific Models: Pre-trained embeddings for niche areas like law, finance, and code for out-of-the-box performance gains.

- Instructional Fine-Tuning: Models like voyage-large-2-instruct follow user instructions for more nuanced embeddings.

- Generous Free Tier: A significant free token allowance makes it accessible for testing and small-scale deployment.

- Long-Context Optimization: A dedicated model designed to handle and retrieve information from lengthy documents effectively.

6. Jina AI

Jina AI provides a diverse family of open-weight, multilingual, and multimodal embedding models that offer an excellent balance of performance and deployment flexibility. Their models, like jina-embeddings-v2-base-en, are engineered to support long context windows up to 8192 tokens, making them particularly effective for RAG systems that process dense, detailed documents. The key differentiator for Jina is its deployment versatility; developers can use a convenient hosted API or self-host the models via AWS SageMaker and other platforms for greater control.

This flexibility makes Jina an attractive option for teams that need to start with a managed service but plan to migrate to a self-hosted environment for security, compliance, or cost reasons. Their strong performance on multilingual benchmarks also positions them as a solid choice for applications serving a global user base, making it a top contender for the best embedding model for RAG in international contexts.

Actionable Retrieval Insights

To take advantage of Jina's long context window, design your chunking strategy to create larger, more contextually complete chunks (up to 8K tokens) where appropriate. This can improve retrieval for documents where meaning is spread across multiple paragraphs, like legal agreements or technical papers. For self-hosting, deploying via AWS SageMaker Marketplace offers a pre-packaged inference endpoint. An actionable tip is to select a GPU instance size that matches your expected throughput to avoid overspending on idle hardware. Monitor GPU utilization during a bulk indexing job to right-size your instance.
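One way to build larger, context-complete chunks is to pack whole paragraphs together until a token budget is reached. The sketch below assumes paragraphs are separated by blank lines and uses a crude whitespace word count as a stand-in for the model's tokenizer; `pack_paragraphs` is a hypothetical helper, and in practice you would pass a real token counter via `count_tokens`.

```python
def pack_paragraphs(text, max_tokens=8192, count_tokens=None):
    """Greedily merge consecutive paragraphs into chunks within max_tokens.

    A single paragraph larger than max_tokens is kept whole as its own chunk;
    splitting it further is left to the caller.
    """
    count_tokens = count_tokens or (lambda s: len(s.split()))  # rough proxy only
    chunks, current, current_tokens = [], [], 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        n = count_tokens(para)
        # Flush the running chunk if adding this paragraph would exceed the budget.
        if current and current_tokens + n > max_tokens:
            chunks.append("\n\n".join(current))
            current, current_tokens = [], 0
        current.append(para)
        current_tokens += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks

example_chunks = pack_paragraphs("a b c\n\nd e\n\nf g h i", max_tokens=5)
```

Because paragraphs are never split, each chunk ends on a natural boundary, which tends to suit documents such as contracts or papers where a clause or argument spans several sentences.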

Key Features:

- Long Context Support: Models handle up to 8192 tokens, ideal for creating context-rich embeddings from complex documents.

- Flexible Deployment: Available via a hosted API or self-deployable through AWS and other cloud marketplaces.

- Strong Multilingual Capabilities: High performance across various languages, including bilingual models.

- Ecosystem Integration: Well-supported in major RAG frameworks and vector databases.

7. Nomic

Nomic has carved out a unique position by offering a high-performance, open-weight embedding model, nomic-embed-text-v1.5, that strongly competes on both performance and cost. It provides an excellent option for developers who need full control over their stack or are operating under strict data privacy constraints that necessitate self-hosting. For teams building RAG systems at a massive scale, Nomic’s extremely competitive pricing for its managed API presents a compelling economic advantage over larger, more established providers.

The company’s commitment to transparency is evident through its public leaderboards and open-source model weights, allowing teams to verify performance independently. While the platform is more streamlined compared to hyperscalers, its focus on delivering a superior core embedding model makes it a powerful choice for cost-sensitive and regulated environments.

Actionable Retrieval Insights

For teams with strict data privacy needs, the most direct action is to self-host the nomic-embed-text-v1.5 model. Use a serving framework like vLLM or Hugging Face's Text Embeddings Inference (TEI) to deploy it on your own infrastructure for maximum control and security. For those using the managed API, its low cost makes it feasible to re-index your entire document corpus frequently as your data or chunking strategies evolve. This agility is a significant advantage. The model also supports Matryoshka Representation Learning (MRL), allowing you to truncate both stored and query vectors to a smaller size, trading a small amount of accuracy for significant speed gains in retrieval.

Key Features:

- Open-Weight Model: nomic-embed-text-v1.5 can be downloaded and self-hosted for complete data sovereignty.

- Highly Cost-Effective: Offers one of the lowest prices per million tokens for its managed API service.

- Variable Dimensions (MRL): Shorten existing embeddings to speed up retrieval without re-embedding your corpus.

- Enterprise-Ready: Provides options for VPC and on-premise deployments for enterprise clients.

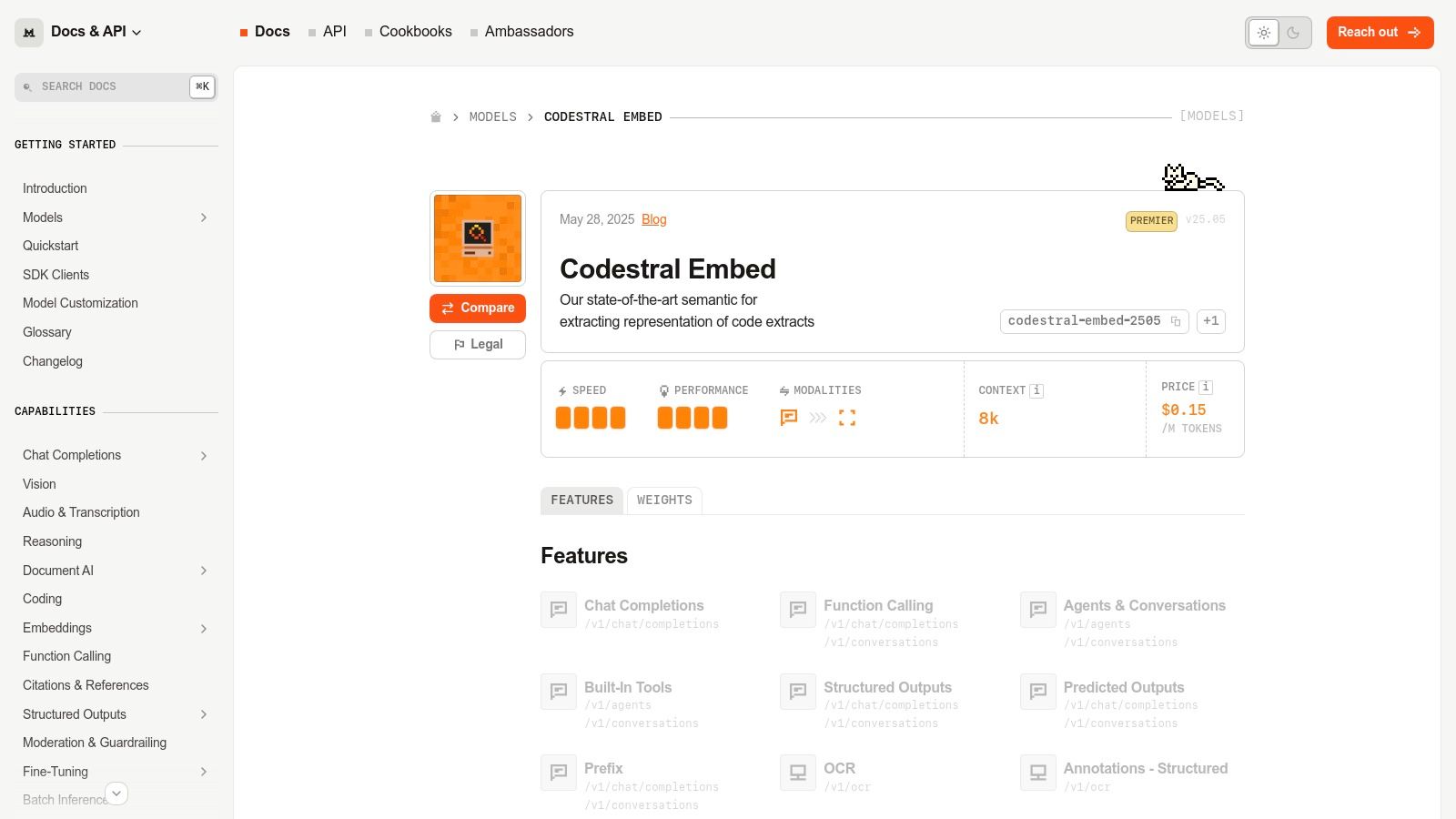

8. Mistral AI

Mistral AI enters the specialized embedding space with its codestral-embed model, a powerful API-based solution purpose-built for code-related retrieval augmented generation. This model is tailored for tasks like semantic search over large code repositories, code completion, and deduplication, making it an excellent choice for developer-facing AI applications. Its strength lies in its deep understanding of programming language syntax and logic, offering more relevant results for code queries than general-purpose text embedding models.

For teams building RAG systems on codebases, Mistral provides a production-ready API with transparent, token-based pricing and clear documentation. While its focus is niche, this specialization is a significant advantage for its target use cases. The platform is designed for developers, offering practical cookbook examples and a modern batching API to handle large-scale indexing efficiently and cost-effectively.

Actionable Retrieval Insights

To get the best retrieval results from codestral-embed, your chunking strategy must be code-aware. Instead of splitting by a fixed token count, parse your source code and create chunks based on logical units like functions, classes, or methods. This ensures each vector represents a meaningful, self-contained block of code. For large-scale repository indexing, always use the batching API endpoint to process multiple code chunks in a single request, which is significantly more efficient and cost-effective than making individual API calls. This is the most critical step for production performance.
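As a minimal sketch of code-aware chunking, the example below uses Python's standard `ast` module to emit one chunk per top-level function or class. It handles Python source only; other languages would need their own parser, and `chunk_python_source` is an illustrative helper name rather than part of any Mistral tooling.

```python
import ast

def chunk_python_source(source):
    """Return one chunk per top-level function or class, with name and line span."""
    tree = ast.parse(source)
    chunks = []
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            chunks.append({
                "name": node.name,
                "kind": type(node).__name__,
                "start_line": node.lineno,
                "end_line": node.end_lineno,
                # Exact source text of the definition, ready to embed.
                "text": ast.get_source_segment(source, node),
            })
    return chunks

sample = '''import os

def load(path):
    return open(path).read()

class Index:
    def add(self, item):
        self.items.append(item)
'''
code_chunks = chunk_python_source(sample)
```

Storing the name and line span alongside each chunk gives you useful metadata for free: a retrieved vector can point straight back to the function or class it came from.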

Key Features:

- Purpose-Built for Code: Specifically tuned for code retrieval, analytics, and semantic search.

- Production API: Offers a scalable, managed endpoint with modern batching support for bulk inference.

- Developer-Focused: Includes public documentation and cookbook examples for common code retrieval tasks.

- Transparent Pricing: Clear token-based pricing with discounts available for batch processing.

9. Hugging Face

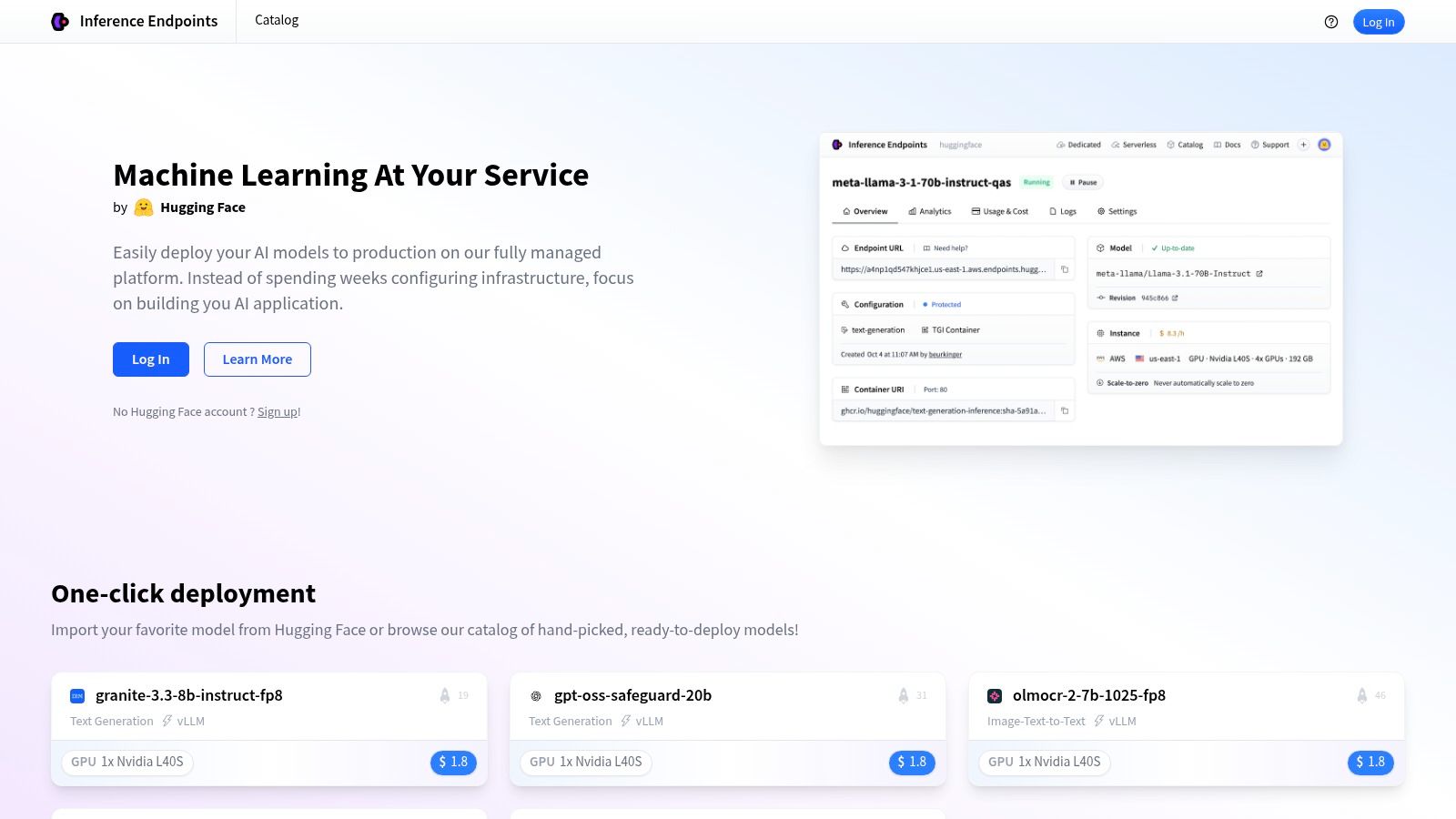

Hugging Face serves as the central hub for open-weight models, offering the largest catalog of embedding solutions perfect for teams that require maximum flexibility and control. It's the ideal platform for discovering, evaluating, and deploying a wide range of models like BGE, E5, and GTE. This makes it an essential resource when you want to A/B test multiple open-source options against your specific dataset to find the best embedding model for your RAG application. Its key value is providing one-click deployment through its Inference Endpoints service.

While the platform grants unparalleled choice, it comes with operational responsibilities. You are in charge of managing instance sizing, autoscaling configurations, and performance tuning. This gives you granular control over the hardware but also means the cost structure is instance-based (per hour) rather than token-based, which can make budget forecasting more complex than with managed APIs.

Actionable Retrieval Insights

To improve retrieval, don't just pick the top model from the leaderboard. Look for models whose model cards give explicit usage instructions. BGE, for example, recommends prefixing short search queries with a specific instruction ("Represent this sentence for searching relevant passages:") while embedding document passages without it. This simple instruction can significantly improve retrieval scores. When deploying via Inference Endpoints, start with a smaller instance size and use a load testing tool to determine the throughput you can achieve before scaling up. This prevents overprovisioning and controls costs. For maximum performance, configure the endpoint to use a container with optimizations like FlashAttention.

Key Features:

- Vast Model Catalog: Access thousands of open-weight embedding models for any use case.

- Dedicated Inference Endpoints: Deploy models on dedicated, autoscaling infrastructure with ease.

- Community Benchmarks: Leverage public leaderboards and model evaluations to guide your selection.

- Flexible Hosting: Choose from multiple cloud providers and GPU instances in various regions.

10. Together AI

Together AI provides a cloud platform that serves as a unified gateway to a curated selection of leading open-weight embedding models. For teams looking to experiment with and deploy models like BGE, GTE, and UAE without managing the underlying infrastructure, Together AI offers a compelling solution. It simplifies the process of benchmarking and selecting the best embedding model for RAG by consolidating multiple high-performing options under a single, OpenAI-compatible API and a unified billing system.

This approach is ideal for developers who want the performance benefits of top open models but prefer the convenience of a managed service. The platform's competitive, per-million-token pricing and generous rate limits make it a cost-effective alternative to hosting these models yourself, especially for projects that need to scale quickly.

Actionable Retrieval Insights

The most powerful action you can take on Together AI is rapid A/B testing. Because its API is OpenAI-compatible, you can switch between top-tier open models like BAAI/bge-large-en-v1.5 and thenlper/gte-large by changing just a single line of code (the model name). Run a small batch of your own documents through several models to quickly identify which one performs best on your specific data, rather than relying solely on public benchmarks. For bulk indexing, always batch your API requests. The platform is optimized for batch processing, and failing to do so will result in slower performance and higher costs due to network overhead.
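A small evaluation harness makes this kind of A/B test repeatable. The sketch below scores each candidate by hit rate at k on a handful of labeled queries. The two embedders are deliberately trivial toys so the example runs offline; in a real test each entry would be a function that calls the API with a different model name. `hit_rate_at_k` and `compare_models` are hypothetical helper names.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def hit_rate_at_k(embed_fn, corpus, labeled_queries, k=3):
    """Share of queries whose expected document id appears in the top-k results."""
    doc_ids = list(corpus)
    doc_vecs = [embed_fn(corpus[d]) for d in doc_ids]
    hits = 0
    for query, expected_id in labeled_queries:
        q = embed_fn(query)
        ranked = sorted(range(len(doc_ids)),
                        key=lambda i: cosine(q, doc_vecs[i]), reverse=True)
        if expected_id in [doc_ids[i] for i in ranked[:k]]:
            hits += 1
    return hits / len(labeled_queries)

def compare_models(embedders, corpus, labeled_queries, k=3):
    """Run the same evaluation for every candidate embedder."""
    return {name: hit_rate_at_k(fn, corpus, labeled_queries, k)
            for name, fn in embedders.items()}

# Toy stand-ins for real models (assumptions, for offline illustration only).
def letter_counts(text):
    return [float(text.lower().count(c)) for c in "abcdefghijklmnopqrstuvwxyz"]

def length_only(text):
    return [float(len(text))]

corpus = {"a": "refund policy", "b": "shipping times"}
labeled_queries = [("shipping", "b"), ("refund", "a")]
scores = compare_models({"toy-letters": letter_counts, "toy-length": length_only},
                        corpus, labeled_queries, k=1)
```

The useful property is that the corpus, queries, and metric stay fixed while only the embedder changes, so any difference in score can be attributed to the model.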

Key Features:

- Curated Model Catalog: Access a variety of popular open embedding models (BGE, E5, GTE) through one API.

- Simplified A/B Testing: An OpenAI-compatible API enables fast, low-effort evaluation of multiple models.

- Simplified Pricing: Straightforward per-million-token pricing across all models.

- Scalable Infrastructure: Offers dedicated endpoints and high rate limits for production workloads.

11. Replicate

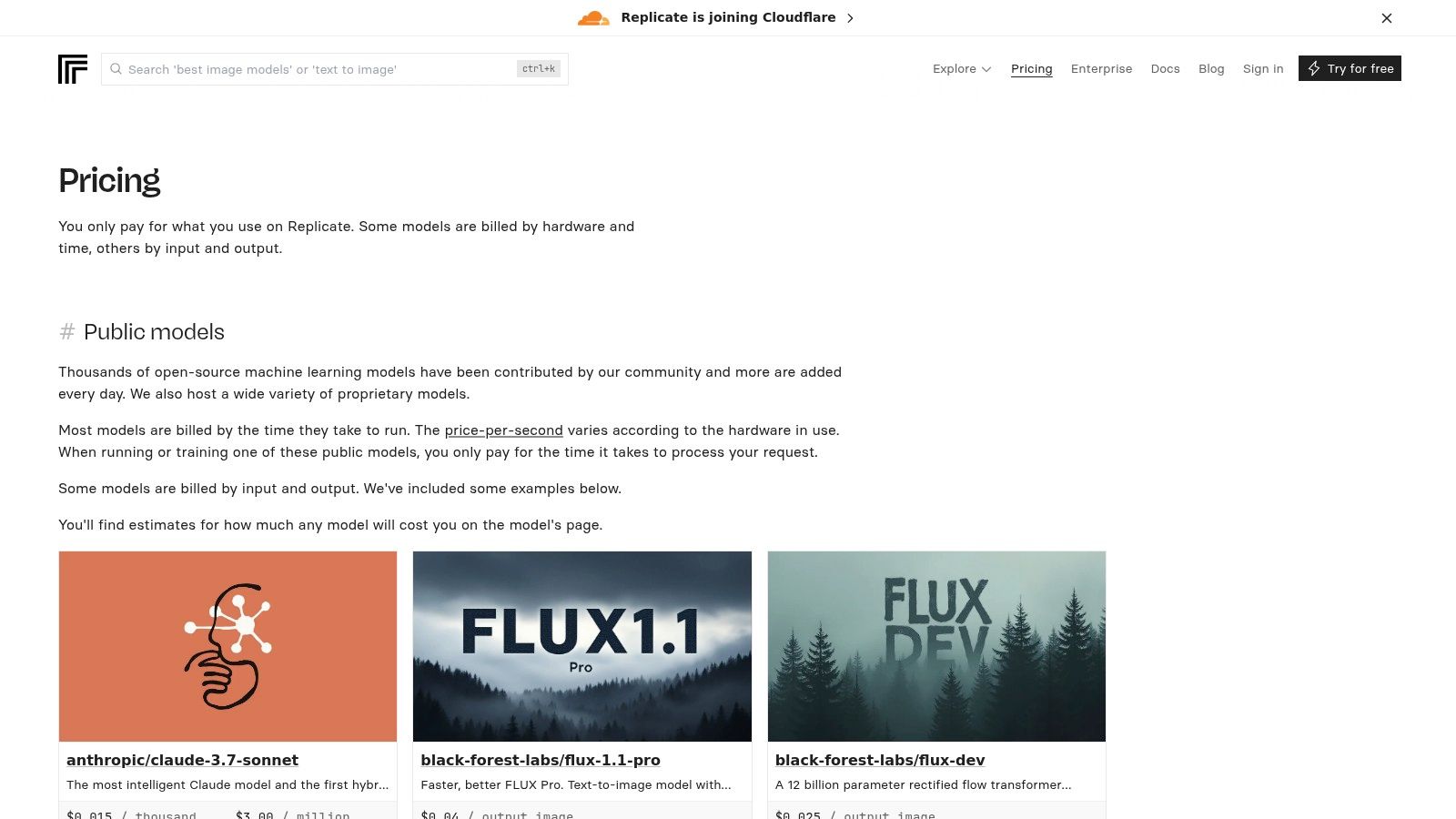

Replicate provides a serverless platform and marketplace for running thousands of open-source models, including a vast array of embedding models well-suited for RAG. Instead of committing to a single model API, Replicate allows you to experiment with and deploy various models via a simple REST API without managing any underlying infrastructure. This makes it an ideal environment for prototyping different embedding strategies or for applications that require a specific open-weight model that isn't available through other managed services.

The platform's key advantage is its flexibility and speed of experimentation. You can find and test a new embedding model in minutes, making it a powerful resource for finding the best embedding model for your RAG use case. While it's great for low-to-medium traffic, factors like cold starts and variable throughput on shared hardware mean it may be less predictable for high-scale, low-latency production indexing compared to dedicated API providers.

Actionable Retrieval Insights

Replicate is best used for experimentation and one-off tasks. An effective action is to use it as a "testing ground" to validate a model's performance on your data before committing to self-hosting it. The per-second billing model means you should optimize your client code to batch inputs to the model wherever its interface allows. This minimizes the number of cold starts you encounter and reduces overhead, directly lowering your cost. For production, consider using their feature to deploy a model on dedicated hardware to eliminate cold starts and ensure consistent performance, though this changes the cost model.

Key Features:

- Vast Model Marketplace: Access to hundreds of open-weight embedding models like BGE, E5, and GTE.

- Per-Second Billing: Pay only for the compute time used, with clear cost estimates provided per model.

- Serverless Deployment: Run models via a simple API without provisioning or managing GPUs.

- Custom Model Support: Deploy your own fine-tuned models using their open-source Cog framework.

12. MTEB (Massive Text Embedding Benchmark)

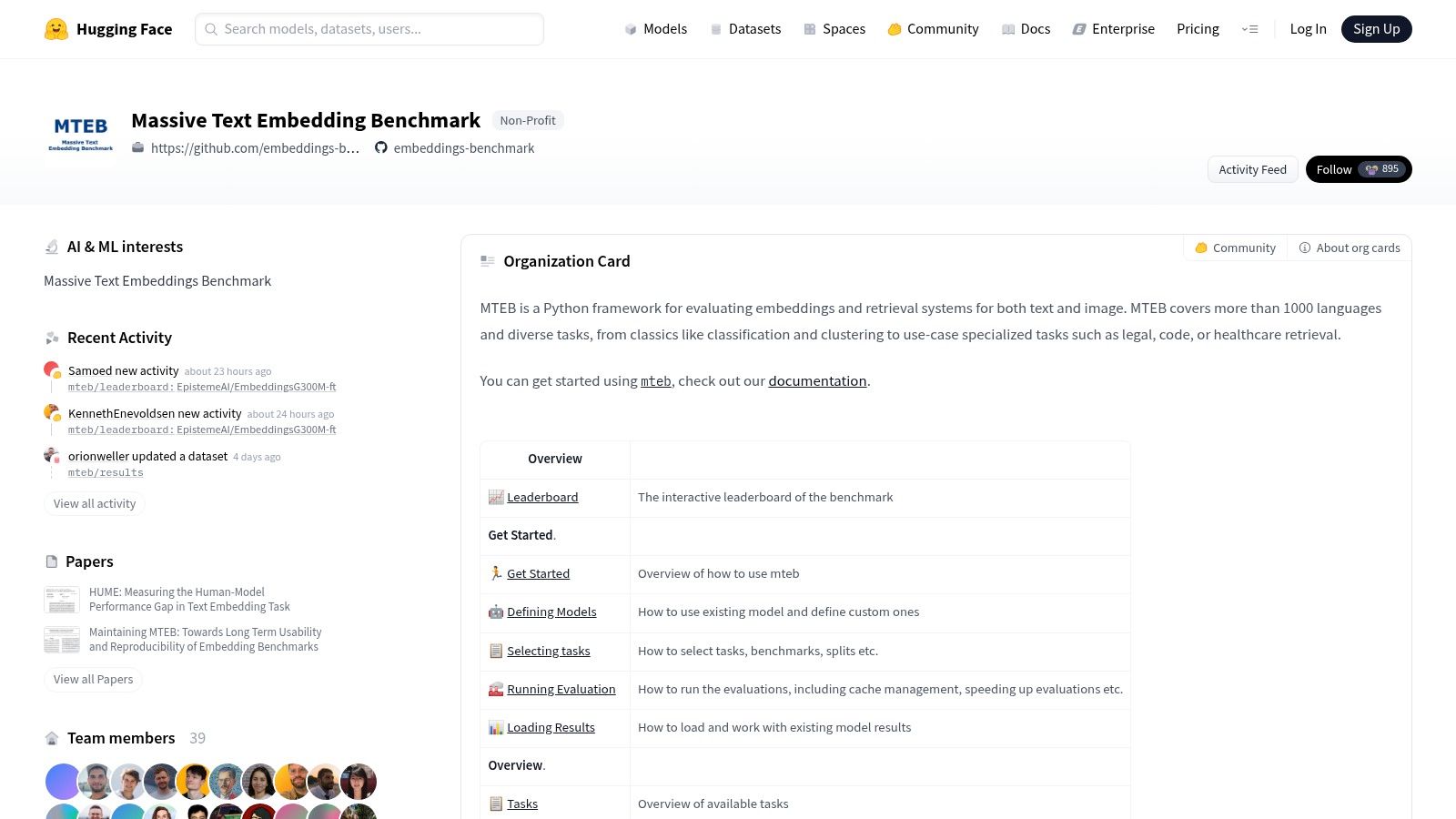

While not an embedding model itself, the Massive Text Embedding Benchmark (MTEB) is an indispensable resource for anyone selecting the best embedding model for RAG. Hosted on Hugging Face, it serves as the de facto public leaderboard, providing a neutral ground to compare the retrieval performance of dozens of models across standardized tasks. It allows developers to make data-driven decisions before committing to a specific model.

The platform is essential for due diligence. You can filter by task (like retrieval) and language to see how different models, from open-source giants to proprietary APIs, stack up. This objective data helps you move beyond marketing claims and see raw performance metrics like nDCG@10, which is highly relevant for RAG quality. While a high MTEB score is a great starting point, remember that these benchmarks may not perfectly mirror the performance on your specific, private corpus.

Actionable Retrieval Insights

The most effective way to use MTEB is to create a shortlist of 3-5 promising candidates based on their retrieval scores, model size, and licensing. Pay close attention to the specific retrieval datasets used in the benchmark; if they align with your domain (e.g., SciFact for scientific papers), the model is more likely to perform well for you. The next critical action is to conduct your own small-scale evaluation on a representative sample of your own data to validate the leaderboard findings. MTEB's open-source toolkit allows you to run the exact same evaluation scripts locally, providing a consistent and reproducible testing methodology.
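For a quick local check on your own labeled sample, nDCG@10 is simple enough to compute by hand. The sketch below uses the linear-gain form of DCG (relevance divided by log2 of rank plus one); some implementations use an exponential gain instead, which gives the same result for binary labels. It illustrates the metric only and is not a substitute for the MTEB toolkit.

```python
import math

def dcg_at_k(relevances, k=10):
    """Discounted cumulative gain over the first k relevance values."""
    return sum(rel / math.log2(rank + 2)          # rank is 0-based here
               for rank, rel in enumerate(relevances[:k]))

def ndcg_at_k(ranked_ids, relevance_by_id, k=10):
    """DCG of the returned ranking divided by the DCG of an ideal ranking."""
    gains = [relevance_by_id.get(doc_id, 0) for doc_id in ranked_ids]
    ideal = sorted(relevance_by_id.values(), reverse=True)
    ideal_dcg = dcg_at_k(ideal, k)
    return dcg_at_k(gains, k) / ideal_dcg if ideal_dcg > 0 else 0.0

relevance = {"d1": 1}                               # one relevant document
perfect = ndcg_at_k(["d1", "d2", "d3"], relevance)  # relevant doc ranked first
second = ndcg_at_k(["d2", "d1", "d3"], relevance)   # relevant doc ranked second
```

Averaging this score over a few dozen representative queries gives a number you can compare directly across the models on your shortlist.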

Key Features:

- Interactive Leaderboards: Filter and sort models by specific tasks, including retrieval, to directly compare relevant performance.

- Open Evaluation Framework: Provides code and documentation to run benchmark tests on your own models or infrastructure.

- Broad Model Coverage: Includes a wide range of open-source and proprietary models, updated frequently by the community.

- Multi-lingual and Multi-domain: Covers a diverse set of languages and retrieval tasks, from general knowledge to domain-specific queries.

Top 12 Embedding Models for RAG — Quick Comparison

| Provider | Core features ✨ | Integration / Ecosystem 🏆 | Target audience 👥 | Quality ★ | Price / Value 💰 |
|---|---|---|---|---|---|
| OpenAI | ✨ Hosted embeddings (large/small), batch API | 🏆 Broad SDK & RAG framework support (LangChain, LlamaIndex) | 👥 Teams wanting stable, scalable hosted embeddings | ★★★★★ | 💰 Token‑based; premium cost per M tokens |
| Google Vertex AI | ✨ Multiple embedding families (Gemini/GTE/E5), batch/online | 🏆 First‑party Vertex Vector Search & GCP integrations | 👥 GCP enterprises needing IAM/regional control | ★★★★ | 💰 Per‑character pricing; GCP credits for trials |
| AWS Bedrock | ✨ Multi‑vendor embeddings (Titan + partners), throughput options | 🏆 Deep AWS security (VPC/KMS) & service integrations | 👥 AWS enterprises requiring consolidated billing/compliance | ★★★★ | 💰 Per‑provider pricing; complex but enterprise‑oriented |
| Cohere | ✨ Multilingual embeddings + rerank models, private deploys | 🏆 Retrieval tooling & enterprise SLAs | 👥 Teams needing rerank + private deployments | ★★★★ | 💰 Clear tiers; some features gated by production keys |
| Voyage AI | ✨ Generous free quota, long‑doc context & multimodal SKUs | 🏆 Vertical models (finance, law, code) | 👥 Large‑scale corpora and vertical use cases | ★★★★ | 💰 Very generous free tier; cost‑effective at scale |
| Jina AI | ✨ Domain‑tuned models; hosted or self‑managed deploys | 🏆 Connectors to vector DBs and RAG frameworks | 👥 Teams needing flexible deployment and connectors | ★★★★ | 💰 Marketplace/instance pricing (hourly) |
| Nomic | ✨ Open weights for self‑hosting, transparent benchmarks | 🏆 Openness for self‑host + regulatory control | 👥 Regulated orgs & large indexing projects | ★★★★ | 💰 Very low list pricing; cost‑efficient for scale |
| Mistral AI | ✨ Code‑focused embeddings (codestral), batching | 🏆 Practical docs and examples for code RAG | 👥 Dev teams building code search/analysis | ★★★★ | 💰 Transparent pricing; batch discounts |
| Hugging Face | ✨ Huge OSS model catalog, Inference Endpoints | 🏆 Best choice to A/B models, community benchmarks | 👥 Teams experimenting across many OSS models | ★★★★★ | 💰 Instance/time billing; flexible but ops overhead |
| Together AI | ✨ Multi open models under one API, per‑M token pricing | 🏆 Quick model swapping and playgrounds | 👥 Teams comparing open models under single bill | ★★★★ | 💰 Competitive per‑M pricing; tiered rate limits |
| Replicate | ✨ Marketplace + serverless hosting for OSS models | 🏆 Fast prototyping; per‑second hardware billing | 👥 Experimenters & quick prototypes | ★★★★ | 💰 Per‑second runtime; good for short runs, less predictable for batches |
| MTEB (benchmark) | ✨ Public leaderboard & reproducible eval toolkit | 🏆 Neutral comparisons across many models/tasks | 👥 Engineers doing model due diligence/A/B tests | ★★★★ | 💰 Free resource; helps avoid costly mis‑picks |

From Theory to Practice: Testing and Tuning Your Embeddings

Navigating the landscape of embedding models can feel overwhelming, but as we've explored, the journey from theoretical benchmarks to practical, high-performing Retrieval-Augmented Generation (RAG) is a systematic process. This article has detailed a wide array of options, from the powerful, proprietary APIs of OpenAI, Cohere, and Google to the flexible, open-source models available through Hugging Face and Together AI. We’ve seen how specialized providers like Voyage AI and Jina AI carve out niches in performance and long-context handling, while platforms like AWS Bedrock and Replicate offer scalable infrastructure for production workloads.

The central takeaway is clear: there is no single best embedding model for RAG that universally outperforms all others in every scenario. The ideal choice is inextricably linked to your specific project constraints, data characteristics, and performance goals. An early-stage startup might prioritize the rapid development and low upfront cost of a model like text-embedding-3-small, while a large enterprise handling sensitive data will gravitate towards self-hosting a model like Nomic Embed or a fine-tuned BGE model for maximum control and security.

Key Takeaways for Optimizing Your RAG Pipeline

Making the right choice involves a holistic view of your system. Here are the most critical factors to carry forward:

- Benchmarks are a Guide, Not a Guarantee: The MTEB leaderboard provides an excellent starting point for shortlisting candidates, but these scores are generated on standardized, academic datasets. Your internal documents, with their unique jargon, structure, and noise, represent the real test environment. A model that excels on Wikipedia articles might struggle with your legal contracts or engineering logs.

- The Trade-off Triangle is Real: You will constantly balance performance (retrieval accuracy), latency (speed), and cost (compute/API fees). High-dimensional models from providers like Voyage AI might offer top-tier accuracy but come with higher operational costs and storage requirements in your vector database. Conversely, a smaller, quantized model can dramatically reduce latency and cost at a potential trade-off in retrieval precision.

- Chunking is Your First and Best Tuning Lever: The way you segment your documents before embedding them has a profound impact on retrieval quality. A suboptimal chunking strategy can undermine even the most powerful embedding model. The wrong split can sever critical context, making a chunk semantically meaningless and impossible to retrieve accurately. Experimentation here is not optional; it’s essential.

- Metadata Unlocks Advanced Retrieval: Embeddings are powerful, but they aren't a silver bullet. Enriching your chunks with structured metadata, such as document source, creation date, author, or even AI-generated summaries and keywords, enables a powerful hybrid search approach. This allows you to filter results at the vector database level, drastically narrowing the search space and improving the relevance of the final context provided to the LLM.
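The metadata point is easiest to see in code. The sketch below applies exact-match metadata filters first and only then ranks the surviving records by cosine similarity. It is an in-memory illustration with toy vectors; a real vector database would apply the filter inside its index, and `filtered_search` and the record layout are assumptions made for this example.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def filtered_search(query_vec, records, where, top_k=3):
    """Keep records whose metadata matches `where`, then rank by similarity."""
    candidates = [r for r in records
                  if all(r["metadata"].get(key) == value
                         for key, value in where.items())]
    candidates.sort(key=lambda r: cosine(query_vec, r["vector"]), reverse=True)
    return candidates[:top_k]

records = [
    {"id": "a", "vector": [0.9, 0.1], "metadata": {"source": "handbook", "year": 2023}},
    {"id": "b", "vector": [0.8, 0.2], "metadata": {"source": "contract", "year": 2025}},
    {"id": "c", "vector": [0.2, 0.9], "metadata": {"source": "contract", "year": 2025}},
]
unfiltered = filtered_search([1.0, 0.0], records, {}, top_k=1)
results = filtered_search([1.0, 0.0], records, {"source": "contract"}, top_k=1)
```

Without the filter, the handbook chunk is the nearest neighbor. With it, the search space shrinks to contract chunks and the best match within that subset is returned instead.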

Your Actionable Path Forward

So, where do you go from here? The path to a production-ready RAG system is paved with iterative testing and evaluation. Start by selecting two or three promising models from our list based on your project's unique profile. Set up a "bake-off" environment where you can systematically compare their performance on a representative sample of your own data.

Focus your evaluation not just on the models themselves but on the entire preprocessing pipeline. Test different chunking strategies, such as fixed-size, sentence-splitting, or semantic chunking. Analyze how the choice of distance metric, like cosine similarity versus dot product, affects your results. This hands-on evaluation is the only way to find the configuration that truly maximizes retrieval performance, turning your theoretical understanding into a practical, high-performing application that delivers accurate, contextually relevant answers.
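The distance-metric question has a concrete answer you can check in a few lines. Dot product rewards vector length as well as direction, while cosine similarity looks at direction only, so the two can rank the same documents differently unless the vectors are normalized to unit length. The toy vectors and names below are illustrative assumptions.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v] if norm else list(v)

def cosine(a, b):
    return dot(normalize(a), normalize(b))

def rank(query, vectors, score):
    """Names of vectors ordered from highest to lowest score."""
    return sorted(vectors, key=lambda name: score(query, vectors[name]), reverse=True)

vectors = {"short_on_topic": [1.0, 0.1], "long_off_topic": [3.0, 3.0]}
query = [1.0, 0.0]

by_dot = rank(query, vectors, dot)          # favors the longer vector
by_cosine = rank(query, vectors, cosine)    # favors the better-aligned vector

unit = {name: normalize(v) for name, v in vectors.items()}
by_dot_unit = rank(query, unit, dot)        # matches cosine once normalized
```

If your embedding model already returns unit-length vectors, the two metrics produce the same ranking and you can pick whichever your vector database computes fastest; if not, the choice can change your results and is worth testing.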

Ready to move from theory to practice? The most significant gains in RAG performance often come from perfecting your chunking strategy, not just swapping models. ChunkForge provides the visual tools you need to inspect, iterate, and perfect your document splitting and metadata enrichment workflows. Stop guessing and start seeing how your data is prepared for embedding by visiting ChunkForge today.