10 Best Practices in Knowledge Management to Supercharge RAG Retrieval

Discover 10 actionable knowledge management best practices to improve retrieval in RAG systems. Boost your AI's performance with expert insights.

Retrieval-Augmented Generation (RAG) has revolutionized how AI interacts with proprietary data, but its effectiveness hinges on a single, often-overlooked foundation: knowledge management. Simply dumping documents into a vector database is a recipe for irrelevant results, context fragmentation, and costly hallucinations. The difference between a proof-of-concept RAG and a production-ready system lies in the deliberate, strategic preparation of your knowledge assets. An ad-hoc approach leads to poor retrieval, which directly undermines the quality of generated answers for your RAG system.

This article moves beyond generic advice to round up the 10 most impactful knowledge management best practices for optimizing retrieval quality. We will cover actionable strategies designed to transform raw documents into high-performance, retrieval-friendly assets that power accurate, reliable, and trustworthy AI. Mastering these techniques is the core of a new discipline: moving beyond traditional keyword-based approaches toward Answer Engine Optimization (AEO), which helps ensure your RAG system uses its knowledge effectively.

You will learn how to implement advanced methods for preparing, structuring, and maintaining your data for enhanced retrieval. We’ll explore everything from semantic chunking and deep metadata enrichment to traceability and continuous monitoring. Each practice is designed to give you precise control over how information is found and used by your LLM, ensuring your RAG system delivers value instead of frustration. This guide provides the tactical framework needed to build a robust knowledge pipeline that delivers consistently superior retrieval performance.

1. Semantic Chunking with Contextual Awareness

Effective knowledge management for RAG requires preparing content for optimal machine comprehension, not just human readability. Semantic chunking is a sophisticated document segmentation strategy that moves beyond arbitrary rules, like fixed character counts. Instead, it intelligently groups text based on its meaning and conceptual coherence, which is a cornerstone of modern best practices in knowledge management.

This method preserves the complete context of an idea within a single chunk, which is critical for Retrieval-Augmented Generation (RAG) systems. When a query is made, the system retrieves entire meaningful passages rather than fragmented sentences, drastically improving the quality and relevance of the information fed to the Large Language Model (LLM). This directly enables more accurate, contextually aware, and useful AI-generated responses.

Why It's a Best Practice

Simple, fixed-size chunking often splits critical information apart, leading to poor retrieval. For instance, a sentence describing a problem might be separated from the sentence detailing its solution, making it impossible for a RAG system to retrieve the full context. Semantic chunking prevents this by identifying natural topic boundaries, ensuring that conceptually related information stays together. This approach is particularly effective for complex documents like legal contracts or detailed technical manuals where context is everything. Platforms like LangChain now include semantic chunking implementations specifically for building robust RAG pipelines. For a deeper technical dive, you can explore a complete guide to understanding semantic chunking and its impact on retrieval accuracy.

Actionable Implementation Tips

To apply this technique effectively, focus on both strategy and tooling for improved RAG retrieval.

- Test Boundaries with Your Embedding Model: The effectiveness of semantic chunks depends on how your chosen embedding model interprets them. A chunk that seems coherent to a human may not translate well into a distinct vector. Always test and validate chunking output against your specific embedding model to ensure optimal retrieval performance.

- Combine with Metadata for Hybrid Search: Enhance retrieval by pairing semantic chunks with rich metadata. Tag chunks with topics, dates, or document sources. This enables a filtered search (often loosely called hybrid search), where the RAG system pre-filters by metadata before performing a semantic search on the narrowed-down results.

- Adjust Overlap Strategically: Use a small, deliberate overlap between semantic chunks. This helps maintain a continuous flow of context for the LLM without introducing redundant information, creating a bridge for queries that fall between two distinct but related ideas.

- Use for Complex Documents: Reserve semantic chunking for your most topic-dense documents. For highly structured content, like simple Q&A lists, a more straightforward fixed-size or token-based approach might be sufficient and more efficient for retrieval.
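The boundary-detection idea behind semantic chunking can be sketched in a few lines. This is a minimal illustration, not a production implementation: the `embed` function is a toy bag-of-words stand-in for a real embedding model, and the `threshold` value and sample sentences are assumptions chosen for the example.

```python
import math
import re
from collections import Counter


def embed(sentence: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", sentence.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def semantic_chunks(sentences: list[str], threshold: float = 0.3) -> list[list[str]]:
    """Start a new chunk whenever adjacent sentences fall below the threshold."""
    if not sentences:
        return []
    chunks = [[sentences[0]]]
    for prev, cur in zip(sentences, sentences[1:]):
        if cosine(embed(prev), embed(cur)) < threshold:
            chunks.append([cur])    # similarity dropped: treat as a topic boundary
        else:
            chunks[-1].append(cur)  # still the same topic: extend the current chunk
    return chunks


sentences = [
    "The pump fails when the inlet filter is clogged.",
    "Clean the inlet filter to restore the pump.",
    "Invoices are issued on the first day of each month.",
    "Late invoices incur a fee each month.",
]
chunks = semantic_chunks(sentences)
```

Here the problem and its fix stay in one chunk, and the invoicing sentences form a second one. With a real embedding model the threshold would need to be re-tuned, which is exactly why testing boundaries against your own model matters.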

2. Deep Metadata Enrichment and Schema-Based Extraction

To elevate a knowledge base from a simple text repository to an intelligent, queryable asset, deep metadata enrichment is essential. This practice involves augmenting content chunks with structured, descriptive data far beyond basic tags. It transforms raw text into smart, filterable objects, forming a critical layer of modern best practices in knowledge management for RAG systems.

This approach uses predefined schemas to extract and attach vital information like summaries, keywords, named entities (e.g., people, organizations), and custom attributes directly to each chunk. For a Retrieval-Augmented Generation (RAG) system, this means queries can be filtered with surgical precision before semantic search even begins. Instead of just searching for similar text, the system can first narrow the search space to chunks from a specific department, created within a certain date range, or containing a particular legal entity, dramatically improving retrieval accuracy and relevance for the LLM.

Why It's a Best Practice

Naive keyword or vector search often fails in complex enterprise environments where context is paramount, leading to poor RAG performance. A query for "Q3 performance review" might pull up documents from every department and year. With schema-based metadata, the same query can be filtered to "Department: Sales, Year: 2023, Document-Type: Performance-Review," ensuring only the most relevant chunks are sent to the LLM. This multi-dimensional filtering is crucial for building robust RAG systems that deliver reliable answers. Platforms like ChunkForge now support custom JSON schema extraction, while vector databases like Pinecone and Weaviate are built to leverage this rich metadata for powerful hybrid search.

Actionable Implementation Tips

Implementing deep metadata for RAG requires a systematic, schema-driven approach.

- Design Schemas Collaboratively: Work with domain experts to define a metadata schema that captures the essential attributes of your documents. Ask: what information is critical for filtering and providing context to the RAG system?

- Automate Extraction: Use LLMs or specialized tools for automated metadata extraction, such as identifying key entities or generating summaries. For a deeper understanding of the techniques involved, explore how Named Entity Recognition powers this process.

- Implement Validation Rules: Ensure metadata consistency by implementing validation rules in your ingestion pipeline. This prevents issues like inconsistent date formats or misspelled category tags that can break the filtering logic in your RAG's retrieval step.

- Use Metadata for Both Filtering and Context: Pass key metadata along with the chunk content to the LLM. This provides crucial context (e.g., "Source: Engineering Handbook, Last Updated: May 2024") that helps the model generate more grounded and trustworthy responses.
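The validation and pre-filtering steps above can be sketched as follows. This is a hedged illustration under simple assumptions: the `Chunk` type, the `ALLOWED_DEPARTMENTS` vocabulary, and the metadata keys are hypothetical, and a real vector database would apply the filter server-side rather than in a Python loop.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical controlled vocabulary agreed with domain experts.
ALLOWED_DEPARTMENTS = {"Sales", "Engineering", "Legal"}


@dataclass
class Chunk:
    text: str
    metadata: dict


def validate(metadata: dict) -> list[str]:
    """Return a list of validation errors; an empty list means the metadata is valid."""
    errors = []
    if metadata.get("department") not in ALLOWED_DEPARTMENTS:
        errors.append(f"unknown department: {metadata.get('department')!r}")
    try:
        date.fromisoformat(str(metadata.get("created", "")))
    except ValueError:
        errors.append(f"created is not an ISO date: {metadata.get('created')!r}")
    return errors


def prefilter(chunks: list[Chunk], **required) -> list[Chunk]:
    """Keep only chunks whose metadata matches every required key/value pair."""
    return [c for c in chunks
            if all(c.metadata.get(k) == v for k, v in required.items())]


corpus = [
    Chunk("Q3 performance review for the sales team ...",
          {"department": "Sales", "year": 2023, "created": "2023-10-02"}),
    Chunk("Q3 performance review for the platform team ...",
          {"department": "Engineering", "year": 2023, "created": "2023-10-05"}),
]
candidates = prefilter(corpus, department="Sales", year=2023)
problems = validate({"department": "sales", "created": "10/02/2023"})
```

Only `candidates` would then go on to semantic search. The `problems` list shows the kind of inconsistency (a lowercase tag, a non-ISO date) that validation rules are meant to catch at ingestion time.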

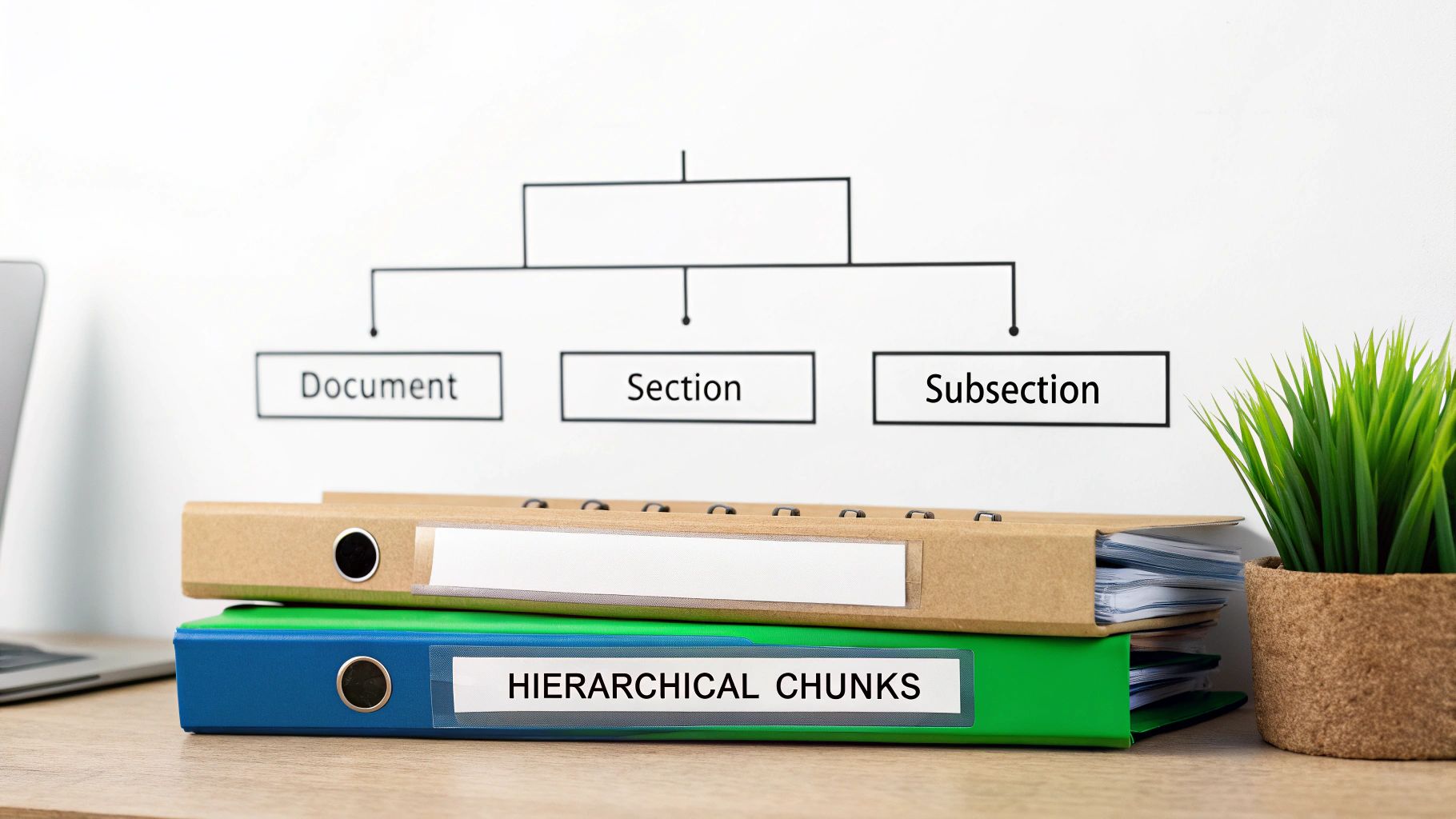

3. Hierarchical Chunking with Multi-Level Granularity

While semantic chunking excels at capturing conceptual meaning, hierarchical chunking introduces structural intelligence to the retrieval process. This strategy segments documents into multiple nested levels (e.g., document → section → subsection → chunk), preserving their inherent relationships. This is a critical component of advanced best practices in knowledge management for structured content, as it enables RAG systems to operate at different granularities.

Instead of treating all chunks as a flat list, this method maintains parent-child links, allowing a Retrieval-Augmented Generation (RAG) system to fetch a high-level overview or a specific, fine-grained detail based on the query. For a broad question, it might retrieve a whole section summary; for a specific one, it can pinpoint a single paragraph within that section. This multi-resolution approach is invaluable for navigating long documents like technical manuals or legal contracts where context scale is crucial for relevance.

Why It's a Best Practice

A flat chunking approach loses the valuable structural context embedded in a well-organized document. Hierarchical chunking retains this structure, allowing the RAG system to understand that a specific code example belongs to a particular API function, which is part of a larger software development kit. This enables more sophisticated retrieval strategies, such as "small-to-big" retrieval, where small, precise chunks are first identified, and then their parent chunks are retrieved to provide broader context to the LLM. This prevents the model from receiving isolated snippets, leading to more comprehensive and well-grounded answers.

Actionable Implementation Tips

To implement this structure for your RAG system, focus on mapping the document's logic before ingestion.

- Map Document Structure: Before processing, analyze your content's native structure (e.g., chapters, H1/H2/H3 tags, clauses). Use this blueprint to define your chunking hierarchy and ensure the parent-child relationships are correctly captured during ingestion.

- Embed Hierarchical Context: Enhance each small chunk by including metadata that references its parent. For example, add the title of the parent section to the metadata of each child chunk. This enriches the vector representation with crucial structural context for retrieval.

- Test Different Granularity Levels: Evaluate retrieval performance against your typical user queries by fetching different levels of the hierarchy. Some questions are best answered by a subsection, while others require the context of an entire chapter to generate an accurate response.

- Implement Smart Context Collapsing: Develop logic to intelligently combine a small, relevant chunk with its parent or sibling chunks to fill the LLM's context window. This "small-to-big" retrieval strategy avoids sending redundant information while still providing a complete picture.
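As a rough sketch of "small-to-big" retrieval, the snippet below maps matched child chunks back to their parent sections and removes duplicates. The `Node` structure, the IDs, and the sample texts are assumptions for illustration; the list of hit IDs stands in for whatever your vector search returns.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    node_id: str
    text: str
    parent_id: Optional[str] = None  # None marks a top-level section


def small_to_big(hit_ids: list[str], nodes: dict[str, Node]) -> list[Node]:
    """Replace each matched child with its parent, deduplicated, in rank order."""
    seen, context = set(), []
    for hit_id in hit_ids:
        node = nodes[hit_id]
        target = nodes[node.parent_id] if node.parent_id else node
        if target.node_id not in seen:  # collapse siblings that share a parent
            seen.add(target.node_id)
            context.append(target)
    return context


nodes = {
    "sec-auth": Node("sec-auth", "Authentication: full section text ..."),
    "auth-1": Node("auth-1", "Tokens expire after 24 hours.", parent_id="sec-auth"),
    "auth-2": Node("auth-2", "Refresh tokens before they expire.", parent_id="sec-auth"),
    "sec-rate": Node("sec-rate", "Rate limits: full section text ..."),
    "rate-1": Node("rate-1", "Limits reset every minute.", parent_id="sec-rate"),
}
# Pretend the vector search matched these small chunks, best match first.
context = small_to_big(["auth-2", "auth-1", "rate-1"], nodes)
```

Two sibling hits collapse into a single parent section, so the LLM receives each section once instead of redundant fragments.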

4. Chunk Window and Overlap Optimization

Tuning chunk size and the degree of content repetition between adjacent chunks is a critical lever for balancing context preservation with retrieval efficiency. Chunk window and overlap optimization moves beyond a "one-size-fits-all" approach, recognizing that different document types, embedding models, and LLM context windows require unique strategies. This is a fundamental aspect of modern best practices in knowledge management for AI.

By strategically introducing a small overlap, typically 10-20% of the chunk size, subsequent chunks gain crucial context from their predecessors. This technique creates a "context bridge" that prevents key information from being split across disconnected chunks, significantly improving the coherence of passages retrieved by the RAG system to answer a complex query. This methodical tuning is essential for building a high-performing RAG system that delivers accurate and complete answers.

Why It's a Best Practice

Without optimized overlap, a critical sentence can be fragmented at a chunk boundary, causing the RAG retrieval system to miss the full picture. For example, a query might retrieve a chunk describing an effect, while the chunk detailing its cause is overlooked. Strategic overlap ensures that such relationships are maintained. This empirical approach requires iterative testing and refinement based on retrieval quality metrics, but the payoff is a system that handles nuanced queries far more effectively, especially with dense material like technical documentation or financial reports.

Actionable Implementation Tips

Fine-tuning your chunking strategy requires experimentation and a clear understanding of your RAG system's components.

- Establish a Baseline and Iterate: Start with a common configuration, such as 256-512 token chunks with a 10-20% overlap. Use this as your baseline and systematically test variations against a defined set of evaluation queries to measure the impact on RAG retrieval quality.

- Align with Your LLM's Context Window: Ensure your chunk size is appropriate for your LLM. A good rule of thumb is to keep chunks small enough that you can fit several (e.g., 3-5) into the LLM's context window, providing rich context for the generation step.

- Test Against Domain-Specific Queries: Generic tests are insufficient. Evaluate different window and overlap configurations using sample queries that are representative of your actual users' needs to find the optimal setup for your specific RAG application.

- Document and Standardize Configurations: Once you identify an optimal configuration for a specific document type or use case, document it. This ensures consistency across your knowledge base and makes the RAG system's retrieval behavior predictable and easier to maintain.
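A baseline sliding-window chunker with overlap might look like the sketch below. It assumes whitespace-separated words as a stand-in for tokenizer tokens; in practice you would count tokens with the tokenizer that matches your embedding model. The function name and defaults are illustrative.

```python
def sliding_window(tokens: list[str], size: int = 256,
                   overlap_ratio: float = 0.15) -> list[list[str]]:
    """Split tokens into windows of `size`, each sharing a prefix with the previous one."""
    if size < 1 or not 0 <= overlap_ratio < 1:
        raise ValueError("size must be >= 1 and overlap_ratio must be in [0, 1)")
    overlap = int(size * overlap_ratio)  # tokens repeated between neighbours
    step = size - overlap                # how far each window advances
    windows = []
    for start in range(0, len(tokens), step):
        windows.append(tokens[start:start + size])
        if start + size >= len(tokens):  # the last window already reaches the end
            break
    return windows


words = [f"w{i}" for i in range(25)]
windows = sliding_window(words, size=10, overlap_ratio=0.2)
```

With 25 tokens, a window of 10, and 20% overlap, this yields three windows, and the last two tokens of each window reappear at the start of the next. Varying `size` and `overlap_ratio` against a fixed set of evaluation queries is one way to run the baseline-and-iterate loop described above.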

5. Traceability and Source Attribution Mapping

In a modern knowledge management system powering AI, simply having the right information is not enough; you must be able to prove where it came from. Traceability mapping creates explicit, verifiable links between processed data chunks and their exact origin in the source document, including page numbers or section references. This practice is a non-negotiable component of trustworthy AI and one of the most critical best practices in knowledge management.

Losing this source attribution makes it impossible for users or auditors to verify AI-generated outputs from your RAG system. By maintaining a clear chain of evidence, organizations ensure that every piece of information an LLM uses can be traced back to its source, which is fundamental for fact-checking, ensuring compliance, and building user confidence in Retrieval-Augmented Generation (RAG) systems.

Why It's a Best Practice

An AI that cannot cite its sources is a black box. For applications in regulated industries like finance or medicine, this is a deal-breaker. Traceability transforms a RAG system from a potential source of misinformation into a reliable research assistant. It allows a medical AI to cite specific passages from clinical trials or a legal tech tool to point to the exact clause in a contract that informed its analysis. This capability is essential for any production-grade RAG application where accuracy and accountability are paramount.

Actionable Implementation Tips

To build a robust traceability framework for RAG, you must embed attribution into every step of your data pipeline.

- Export Traceability Metadata: When processing and chunking documents, ensure your pipeline automatically captures and stores source metadata. This should include the filename, page number, and section heading alongside the chunk content itself to be passed to the RAG application.

- Implement Citation Rendering: Your RAG application's user interface must be designed to display citations clearly. Allow users to easily click a reference in the AI-generated response and be taken directly to the corresponding section of the source document for verification.

- Use Visual Verification Tools: Before exporting chunks into your vector database, use tools that provide a visual overlay to confirm that the text content and its location metadata are perfectly aligned. This prevents incorrect attributions from degrading RAG system trust.

- Document Your Traceability Schema: Create and maintain clear documentation for your traceability metadata schema. This ensures consistency across different data sources and ingestion pipelines as your RAG knowledge base grows.
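One way to carry attribution through the pipeline is to store a source reference next to every chunk and render it with the answer. The sketch below is illustrative only: the `SourceRef` fields mirror the filename, page number, and section heading mentioned above, while the type names, file names, and citation format are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRef:
    filename: str
    page: int
    section: str


@dataclass(frozen=True)
class TracedChunk:
    text: str
    source: SourceRef


def render_answer(answer: str, used: list[TracedChunk]) -> str:
    """Append a numbered source list for the chunks that informed the answer."""
    lines = [answer, "", "Sources:"]
    for i, chunk in enumerate(used, start=1):
        s = chunk.source
        lines.append(f'[{i}] {s.filename}, p. {s.page}, section "{s.section}"')
    return "\n".join(lines)


used_chunks = [
    TracedChunk("Either party may terminate with 30 days notice.",
                SourceRef("master-agreement.pdf", 12, "Termination")),
]
rendered = render_answer("The contract can be ended with 30 days notice [1].", used_chunks)
```

Because the reference travels with the chunk, the user interface can turn each numbered entry into a link that opens the source document at the cited page.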

6. Format-Specific Chunking Strategies (PDF, Markdown, Tables)

Not all content is created equal, and a one-size-fits-all chunking approach can destroy the integrity of structured documents. Format-specific chunking recognizes that PDFs, Markdown files, and documents with tables or code blocks require specialized parsing. This advanced technique is one of the most critical best practices in knowledge management for preventing the loss of vital structural context during RAG ingestion.

This method employs distinct parsers and segmentation logic for each file type. For example, it ensures a table in a PDF is treated as a single, coherent unit rather than being fragmented into nonsensical rows. Similarly, it preserves the syntax and atomicity of code blocks in technical documentation. By respecting the inherent structure of the source material, this strategy provides the RAG system with clean, well-organized, and contextually complete information, significantly boosting retrieval accuracy.

Why It's a Best Practice

A generic text splitter fails to understand the semantic importance of formatting. It might split a multi-row table entry, separate a code function from its comments, or discard the hierarchical relationships defined by Markdown headers, leading to flawed retrieval. Format-specific chunking prevents this by using intelligent parsers that can identify and isolate these structured elements. This is crucial for knowledge bases built from diverse sources like financial reports or technical manuals, where tables and code are essential for comprehension. For a deeper look into handling one of the most common formats, explore this guide on converting PDFs to structured Markdown.

Actionable Implementation Tips

To implement format-specific chunking for RAG, build a pipeline that identifies document types and routes them to the correct parser.

- Preserve Code Blocks as Atomic Units: Treat code snippets and their accompanying explanations as indivisible chunks. This ensures that when a developer asks a question, the RAG system retrieves the full, functional code example, not a fragmented piece of syntax.

- Keep Tables Intact: Never split a table across multiple chunks. Extract the entire table, convert it to a structured format like Markdown, and store it within a single chunk. Add a descriptive summary to the associated metadata to improve its retrievability.

- Leverage Markdown and HTML Structure: Use heading levels (H1, H2, etc.) in Markdown or HTML as natural semantic boundaries for chunking. This aligns your chunks with the document's logical topic structure as intended by the author, improving retrieval for section-specific queries.

- Validate Parsing Accuracy: Before bulk processing, run a representative sample of each document type through your parsing pipeline. Manually inspect the output to ensure tables, lists, and other structures are preserved correctly and without errors that would harm retrieval.
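For Markdown sources, the tips above can be approximated with a small heading-aware splitter. This is a simplified sketch, not a full Markdown parser: it splits only on heading lines and ignores `#` characters inside fenced code blocks, so code blocks and tables stay inside the section they belong to. The sample document is hypothetical.

```python
FENCE = "`" * 3  # built this way so the example can itself sit inside a fenced block


def split_markdown(text: str) -> list[str]:
    """Split on heading lines, but never inside a fenced code block."""
    chunks, current, in_fence = [], [], False
    for line in text.splitlines():
        if line.lstrip().startswith(FENCE):
            in_fence = not in_fence          # entering or leaving a code fence
        is_heading = not in_fence and line.startswith("#")
        if is_heading and current:
            chunks.append("\n".join(current).strip())
            current = []
        current.append(line)
    if current:
        chunks.append("\n".join(current).strip())
    return [c for c in chunks if c]


doc = "\n".join([
    "# Install",
    "Run the installer.",
    FENCE + "bash",
    "# this comment is not a heading",
    "pip install example",
    FENCE,
    "## Limits",
    "| Plan | Requests |",
    "|---|---|",
    "| Free | 100 |",
])
md_chunks = split_markdown(doc)
```

The shell comment inside the fence does not trigger a split, and the table remains whole inside the "Limits" chunk. PDFs and other formats would need their own parser in the routing pipeline.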

7. Adaptive & Federated Chunking Strategies

Going beyond a one-size-fits-all approach, advanced knowledge management combines adaptive and federated chunking to handle diverse content types with precision. This sophisticated strategy treats document segmentation not as a single task, but as a dynamic process tailored to both content structure and knowledge domain. This level of customization represents one of the most powerful best practices in knowledge management for enterprise-scale RAG.

Adaptive chunking dynamically adjusts segment size based on the local complexity of the content, creating smaller chunks for dense sections and larger ones for narrative passages. Federated chunking applies entirely different, domain-optimized strategies for distinct content types. For example, it might use a clause-based chunker for legal contracts and a code-aware chunker for technical documentation, all within the same knowledge base. This dual approach ensures that every piece of information is optimally prepared for high-quality retrieval.

Why It's a Best Practice

A single chunking strategy rarely performs optimally across heterogeneous data sources like legal contracts and marketing reports. Adaptive chunking prevents information loss in complex sections, like the methodology of a research paper, by using smaller, focused chunks. Meanwhile, federated strategies ensure that domain-specific structures, like financial tables or legal clauses, are parsed intelligently rather than being awkwardly split. This tailored processing significantly boosts retrieval relevance by ensuring chunks are both contextually complete and appropriately granular for their specific domain.

Actionable Implementation Tips

To implement this advanced strategy for better RAG retrieval, start small and build complexity methodically.

- Define Domain-Specific Heuristics: Work with domain experts to define what makes content "complex" in their field. For legal text, it might be clause density; for technical docs, it could be the frequency of code blocks. Use these heuristics to drive your adaptive logic.

- Implement a Routing Mechanism: Build a "router" in your ingestion pipeline that identifies the document type (e.g., based on metadata or content analysis) and directs it to the appropriate federated chunking logic for processing.

- Start with Two Domains: Begin by developing and testing federated strategies for just two distinct and high-value content domains. This allows you to refine the process and demonstrate improved retrieval quality before scaling.

- Log Complexity for Refinement: In your adaptive strategy, log the calculated complexity scores for each chunk. This data is invaluable for analyzing retrieval performance and iteratively refining the algorithms that determine chunk size and boundaries.
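The routing mechanism and a simple adaptive heuristic can be sketched together. Everything here is an assumption for illustration: the `doc_type` key, the two chunkers, and the digit-share heuristic for "complexity" are placeholders for whatever your domain experts define.

```python
import re


def clause_chunker(text: str) -> list[str]:
    """Split legal text before numbered clauses such as '1.' or '2.1' at line start."""
    parts = re.split(r"\n(?=\d+(?:\.\d+)*\.?\s)", text.strip())
    return [p.strip() for p in parts if p.strip()]


def paragraph_chunker(text: str) -> list[str]:
    """Fallback: split on blank lines."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]


# Federated strategies keyed by a hypothetical document type label.
CHUNKERS = {"legal": clause_chunker, "default": paragraph_chunker}


def route(doc: dict) -> list[str]:
    """Send the document to the chunker registered for its type."""
    chunker = CHUNKERS.get(doc.get("doc_type"), CHUNKERS["default"])
    return chunker(doc["text"])


def adaptive_size(text: str, dense: int = 200, narrative: int = 500,
                  threshold: float = 0.15) -> int:
    """Pick a smaller target size when many tokens contain digits (a toy density signal)."""
    tokens = text.split()
    if not tokens:
        return narrative
    dense_share = sum(any(ch.isdigit() for ch in t) for t in tokens) / len(tokens)
    return dense if dense_share >= threshold else narrative


contract = {"doc_type": "legal",
            "text": "1. Term\nThis agreement lasts one year.\n2. Fees\nFees are due monthly."}
memo = {"doc_type": "memo", "text": "First paragraph.\n\nSecond paragraph."}
legal_chunks = route(contract)
memo_chunks = route(memo)
```

Starting with a registry of two chunkers keeps the router easy to test, and logging the `dense_share` value per chunk would give you the complexity data needed to refine the heuristic later.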

8. Quality Assurance and Retrieval Testing Frameworks

Guesswork has no place in a high-performing knowledge base for RAG. Establishing a robust Quality Assurance (QA) and retrieval testing framework is essential for ensuring that your system consistently delivers accurate and relevant information. This involves systematically evaluating your entire data pipeline, from chunking quality to retrieval effectiveness. Implementing this is one of the most critical best practices in knowledge management for reliable AI.

This evidence-based approach moves beyond assumptions by using empirical data to validate your system's performance. Frameworks like RAGAS and TruLens allow you to run automated tests that measure how well your chunks are retrieved for relevant queries, whether they are self-contained, and if they provide sufficient context. By identifying problematic chunks and suboptimal configurations early, you can build a trustworthy and effective Retrieval-Augmented Generation (RAG) system.

Why It's a Best Practice

Without systematic testing, you are flying blind. A chunking strategy that seems logical on paper might perform poorly with your specific embedding model and user queries, leading to poor RAG outputs. A QA framework provides the necessary feedback loop to diagnose and fix retrieval issues. It allows you to compare different chunking strategies or embedding models using concrete metrics like recall, precision, and Mean Reciprocal Rank (MRR). This ensures your knowledge base isn't just a repository of data but a finely tuned engine for accurate information retrieval.

Actionable Implementation Tips

Integrate a continuous evaluation process into your knowledge management workflow to improve RAG retrieval.

- Build a Golden Test Set: Curate a representative set of queries and their ideal corresponding document chunks. This "golden set" should reflect real-world use cases and serves as the benchmark for all automated retrieval testing and regression analysis.

- Define and Track Key Metrics: Align your success metrics with business outcomes. Use established information retrieval metrics like Normalized Discounted Cumulative Gain (NDCG) for ranking quality, and use metrics from frameworks like RAGAS to measure both the relevance of retrieved context and the faithfulness of generated answers.

- Automate Regression Testing: Implement automated tests within your CI/CD pipeline to run whenever you update your chunking logic, embedding models, or source documents. This catches regressions early and prevents a decline in retrieval quality over time.

- Involve Domain Experts: While automated metrics are crucial, human evaluation is irreplaceable. Regularly involve subject matter experts to review retrieved chunks and LLM responses for nuance and contextual accuracy that automated systems might miss.
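A golden test set can be scored with a few lines of plain Python before you adopt a full evaluation framework. The sketch below computes recall@k and Mean Reciprocal Rank; the `fake_index` lookup is a stand-in for your real retriever, and the queries and chunk IDs are invented for the example.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Share of the relevant chunks that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant chunk, or 0.0 if none was retrieved."""
    for rank, chunk_id in enumerate(retrieved, start=1):
        if chunk_id in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(golden: list[dict], retrieve, k: int = 3) -> dict:
    """Average recall@k and MRR over every query in the golden set."""
    recalls, rrs = [], []
    for case in golden:
        results = retrieve(case["query"])
        recalls.append(recall_at_k(results, case["relevant"], k))
        rrs.append(reciprocal_rank(results, case["relevant"]))
    n = len(golden) or 1
    return {"recall@k": sum(recalls) / n, "mrr": sum(rrs) / n}


# Stand-in retriever: a fixed ranking per query instead of a real vector search.
fake_index = {
    "reset password": ["c7", "c1", "c3"],
    "refund policy": ["c4", "c9", "c2"],
}
golden = [
    {"query": "reset password", "relevant": {"c1"}},
    {"query": "refund policy", "relevant": {"c2", "c5"}},
]
report = evaluate(golden, lambda q: fake_index.get(q, []), k=3)
```

Running `evaluate` in CI against each candidate chunking configuration gives a simple regression signal: if recall@k or MRR drops relative to the previous run, the change should not be promoted.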

9. Version Control and Change Management for Knowledge Assets

Adopting a software engineering mindset for your knowledge base is a crucial step in modernizing your strategy. Version control treats documents, their chunking configurations, and associated metadata as versioned assets. This approach, a cornerstone of best practices in knowledge management, recognizes that your knowledge base is not static; source documents are updated, and ingestion pipelines are refined.

This practice enables teams to track changes, roll back to previous states if an update degrades RAG retrieval performance, and maintain a complete audit trail. For Retrieval-Augmented Generation (RAG) systems, this is vital. A change in a chunking strategy can have a profound impact on response quality. Without versioning, diagnosing and reversing a problematic deployment becomes a difficult, manual process.

Why It's a Best Practice

Uncontrolled changes to knowledge assets introduce instability and unpredictability into RAG systems. A seemingly minor document update or a new metadata tag can inadvertently degrade retrieval accuracy for critical queries. By implementing version control, you create a stable, auditable, and reproducible environment, which is especially important in regulated industries where maintaining a traceable history of information is a compliance requirement.

This systematic approach allows for safe experimentation. Teams can test a new chunking algorithm on a separate branch without impacting the production knowledge base. If the new approach proves superior for retrieval, it can be systematically merged and deployed. This mirrors the CI/CD workflows used in software development, bringing the same level of discipline and reliability to knowledge management.

Actionable Implementation Tips

To effectively integrate versioning for RAG, adapt tools and processes from the software and MLOps worlds.

- Use Git for Configurations: Store your ingestion pipeline scripts, chunking configurations, and metadata schemas in a Git repository. This provides a clear history of every change made to your knowledge processing logic that impacts RAG retrieval.

- Tag Versions Meaningfully: Instead of relying on commit hashes, tag key versions with descriptive names (e.g., semantic-chunking-v2-20241026). This makes it easier to identify and roll back to specific, stable states of your knowledge base.

- Implement Staging Environments: Create a staging environment to test changes to your knowledge assets. Run an evaluation suite of "golden queries" against the staged version to ensure retrieval quality has not regressed before promoting to production.

- Maintain Historical Chunks: For audit and compliance, consider retaining historical versions of chunks alongside the current ones. This allows you to reconstruct the exact information available to the RAG system at any given point in time.
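One lightweight complement to Git tags is to stamp every chunk with a fingerprint of the configuration that produced it. The sketch below is an assumption about how this could be done, not a prescribed method: the config keys and the tag format (a descriptive name plus a short hash) are illustrative.

```python
import hashlib
import json


def config_fingerprint(config: dict) -> str:
    """Short, stable hash of a chunking configuration, independent of key order."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def version_tag(name: str, config: dict) -> str:
    """Combine a descriptive name with the config fingerprint."""
    return f"{name}-{config_fingerprint(config)}"


config_a = {"strategy": "semantic", "chunk_size": 384, "overlap": 0.15}
config_b = {"overlap": 0.15, "chunk_size": 384, "strategy": "semantic"}  # same settings
config_c = {"strategy": "semantic", "chunk_size": 512, "overlap": 0.15}  # changed size

tag = version_tag("semantic-chunking-v2-20241026", config_a)
```

Storing `tag` in each chunk's metadata makes it possible to tell which configuration produced a given chunk, and to confirm that a rollback really restored the earlier settings.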

10. Continuous Monitoring and Performance Optimization of Chunking Pipelines

Viewing your knowledge ingestion process as a static, one-time setup is a common pitfall. A core tenet of modern best practices in knowledge management is treating chunking and embedding pipelines as dynamic, production-grade systems that require continuous observation and refinement. This involves actively monitoring their performance to prevent silent failures and ensure the long-term health of your RAG system's retrieval component.

Continuous monitoring means instrumenting your entire pipeline, from initial document parsing to final embedding generation. By tracking key metrics, you can detect when chunking strategies degrade, when structural changes in source documents break your logic, or when embedding models begin to drift. This proactive approach ensures that the knowledge base fed to your LLM remains high-quality, relevant, and accurate over time, directly impacting retrieval performance and user trust.

Why It's a Best Practice

A "set it and forget it" attitude towards chunking pipelines inevitably leads to a decline in retrieval quality. Source documents evolve, new content types are introduced, and user query patterns shift. Without monitoring, these changes introduce subtle bugs that manifest as irrelevant search results or increased AI hallucinations from your RAG system.

By implementing observability, you transform this reactive process into a proactive one. For instance, an enterprise RAG system might use a Grafana dashboard to track retrieval success rates per document type. A sudden drop could immediately signal an issue with a recent change in that document’s formatting, allowing for a swift fix before it impacts users. This methodology, borrowed from MLOps and production data engineering, is essential for maintaining a reliable knowledge retrieval system at scale.

Actionable Implementation Tips

To effectively monitor your chunking pipelines for RAG, focus on establishing a clear framework for observation and response.

- Define Business-Aligned Success Metrics: Go beyond technical metrics. Track outcomes that matter, such as retrieval success rates, user feedback scores on AI answers, or the rate of "I don't know" responses from your RAG system.

- Instrument with Structured Logging: Implement structured logging throughout your pipeline. This allows you to easily query and analyze events, such as chunk size distribution, metadata extraction failures, or embedding generation errors that affect retrieval.

- Create Stakeholder Dashboards: Build and share dashboards that visualize key metrics like retrieval relevance, latency, and chunking errors. Make this data visible to product managers, knowledge managers, and developers to foster a shared sense of ownership.

- Set Up Proactive Alerts: Configure alerts for significant metric degradation. For example, trigger an alert if the average cosine similarity score for top-retrieved chunks drops below a predefined threshold for more than an hour.

- Schedule Regular Performance Reviews: Dedicate time (e.g., bi-weekly or monthly) to review monitoring data, identify trends, and prioritize optimization tasks for your RAG pipeline. This ensures continuous improvement rather than just reactive firefighting.
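Structured logging and a proactive alert can be combined in a small monitor. This sketch makes two simplifying assumptions: it uses a rolling window of the most recent queries rather than a one-hour time window, and the threshold, window size, and logger name are placeholders to be tuned for your system.

```python
import json
import logging
from collections import deque

logger = logging.getLogger("rag.monitoring")  # hypothetical logger name


class SimilarityMonitor:
    """Tracks the top similarity score per query and flags sustained drops."""

    def __init__(self, threshold: float = 0.6, window: int = 50):
        self.threshold = threshold
        self.scores = deque(maxlen=window)  # only the most recent scores are kept

    def record(self, query_id: str, top_score: float) -> bool:
        """Log one retrieval event; return True when the alert condition holds."""
        self.scores.append(top_score)
        average = sum(self.scores) / len(self.scores)
        window_full = len(self.scores) == self.scores.maxlen
        alert = window_full and average < self.threshold
        # One JSON object per event keeps the log easy to query and chart.
        logger.info(json.dumps({
            "event": "retrieval",
            "query_id": query_id,
            "top_score": top_score,
            "rolling_avg": round(average, 4),
            "alert": alert,
        }))
        return alert


monitor = SimilarityMonitor(threshold=0.6, window=3)
alerts = [monitor.record(f"q{i}", score)
          for i, score in enumerate([0.9, 0.5, 0.3, 0.9, 0.9])]
```

The alert fires only once the window is full and the rolling average sits below the threshold, then clears as scores recover. In production the returned flag would be wired to your alerting tool and the JSON events fed into a dashboard.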

Comparison of 10 Knowledge Chunking Best Practices

| Strategy | 🔄 Implementation complexity | ⚡ Resource requirements | ⭐ Expected outcomes (quality) | 📊 Ideal use cases | 💡 Key advantages (tips) |
|---|---|---|---|---|---|
| Semantic Chunking with Contextual Awareness | High: embedding models & NLP pipeline integration | Medium–High: compute for embeddings, model infra | ⭐⭐⭐⭐: Superior retrieval relevance; coherent context | Multi-topic docs, RAG pipelines, legal & medical corpora | Preserves conceptual coherence; reduces false positives |
| Deep Metadata Enrichment & Schema-Based Extraction | High: schema design + extraction workflows | High: NLP extractors, storage for rich metadata | ⭐⭐⭐⭐: Dramatically improves precision & filterability | Compliance, legal discovery, enterprise search, audits | Enables faceted filtering and explainability; design schemas iteratively |
| Hierarchical Chunking with Multi-Level Granularity | High: parent-child tracking & query routing | Medium: additional indexing and storage overhead | ⭐⭐⭐⭐: Flexible granularity; better multi-hop reasoning | Long technical docs, manuals, research papers, contracts | Supports coarse-to-fine retrieval and progressive summarization |
| Chunk Window & Overlap Optimization | Medium: tuning and empirical testing | Medium: increased storage/indexing due to overlap | ⭐⭐⭐: Reduces boundary fragmentation; improves coherence | Pipelines tuned to specific LLM context windows | Empirically tune chunk size/overlap; visualize impact before export |
| Traceability & Source Attribution Mapping | Medium–High: provenance mapping & overlays | Medium: metadata storage, UI for source navigation | ⭐⭐⭐⭐: Enables verification, auditability, reduced hallucination | Regulated industries, fact-checking, legal/medical workflows | Essential for provenance; always export traceability metadata |
| Format-Specific Chunking (PDF, Markdown, Tables) | Medium: multiple parsers & format handlers | Medium: format tooling and edge-case handling | ⭐⭐⭐: Preserves structural semantics; fewer parsing errors | PDFs, Markdown repos, code docs, tables/CSV-heavy content | Preserve code blocks/tables as atomic units; validate parsers |
| Adaptive & Federated Chunking Strategies | Very High: dynamic logic + domain templates | High: content analysis, domain configs, expert input | ⭐⭐⭐⭐: Optimizes chunk quality across heterogeneous data | Enterprise multi-domain KBs (legal, medical, finance) | Start simple; apply adaptive only to high-value domains |
| Quality Assurance & Retrieval Testing Frameworks | Medium: test suite + metric instrumentation | Medium: labeled queries, evaluation compute | ⭐⭐⭐⭐: Prevents low-quality deployments; measurable gains | Production RAG, A/B testing of chunking strategies | Build representative query sets and automate regression tests |
| Version Control & Change Management for Knowledge Assets | Medium: VCS integration and workflows | Low–Medium: storage for versions, CI/CD tooling | ⭐⭐⭐: Safe experimentation; audit trails and rollbacks | Teams iterating on configs; regulated environments | Tag meaningful versions; use branching for experiments |
| Continuous Monitoring & Performance Optimization | Medium–High: telemetry, dashboards, alerts | Medium–High: monitoring infra, logging, dashboards | ⭐⭐⭐⭐: Detects drift; maintains long-term retrieval quality | Long-lived production systems and enterprise KBs | Define business-aligned metrics and set alerts for degradation |

From Raw Documents to Retrieval-Ready Assets

The journey from a chaotic repository of raw documents to a meticulously curated collection of retrieval-ready assets is the cornerstone of high-performing RAG systems. Implementing the best practices for knowledge management detailed in this article is not merely an optimization step; it is a fundamental shift in how we prepare our data for AI. We've moved beyond the rudimentary approach of simply splitting text and now embrace a more sophisticated, strategic discipline. This evolution is critical for building RAG applications that are not just functional, but genuinely reliable, context-aware, and trustworthy.

The core takeaway is this: the quality of your RAG system's output is a direct reflection of the quality of its retrieval. Default chunking methods create a fragile foundation, leading to a cascade of downstream issues like hallucinated answers, missed context, and poor source attribution. By treating document preparation as a first-class engineering discipline focused on retrieval, you preemptively solve these problems at the source, saving countless hours of difficult downstream debugging.

Recapping the Pillars of Advanced Knowledge Management

Let's distill the most crucial takeaways for improving RAG retrieval:

- Precision Over Proximity: The goal is not just to find nearby information but to retrieve the most relevant and complete conceptual unit. This is achieved through semantic chunking, hierarchical organization, and format-specific strategies that respect the inherent structure of your documents, from complex PDFs to structured tables.

- Context is King: Chunks are not isolated islands of text. Their value for retrieval is magnified by their connections. Deep metadata enrichment, optimized chunk windowing, and clear traceability back to the source provide the essential context that LLMs need to synthesize accurate, nuanced, and verifiable responses. Without this rich layer of metadata, your retrieval system is flying blind.

- Knowledge Management is a Lifecycle, Not a Task: A "set it and forget it" mentality is the fastest path to a stale and underperforming RAG system. The most effective best practices for knowledge management involve a continuous, iterative cycle. This includes robust version control for knowledge assets, automated quality assurance frameworks for retrieval testing, and continuous monitoring of your ingestion pipelines to adapt to new data and evolving requirements.

Your Actionable Next Steps

Mastering RAG retrieval requires a deliberate and structured approach. Begin by auditing your current ingestion pipeline against the practices outlined here. Are you still using a one-size-fits-all recursive text splitter? Are you enriching your chunks with meaningful, schema-based metadata so that retrieval can combine semantic search with metadata filters?

Start small by selecting a representative subset of your documents and applying one or two of these advanced techniques, such as implementing semantic chunking or a basic traceability map. Establish a baseline for retrieval quality and measure the improvement. This evidence-based approach will build momentum and demonstrate the tangible value of investing in a more sophisticated knowledge management strategy. By systematically elevating your document preparation process, you are not just improving a single component; you are building a resilient, scalable, and intelligent foundation that will define the success of your RAG initiatives.
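To make the "establish a baseline and measure the improvement" step concrete, here is a minimal sketch that compares two retrieval runs using hit rate at k and mean reciprocal rank (MRR). The query IDs, chunk IDs, and relevance labels are invented for illustration; in practice they would come from your own labeled query set and from running each pipeline variant against it.

```python
from typing import Dict, List, Set

Results = Dict[str, List[str]]  # query id -> ranked chunk ids
Labels = Dict[str, Set[str]]    # query id -> chunk ids judged relevant


def hit_rate_at_k(results: Results, relevant: Labels, k: int = 5) -> float:
    """Fraction of queries with at least one relevant chunk in the top k."""
    hits = sum(1 for q, ranked in results.items() if set(ranked[:k]) & relevant[q])
    return hits / len(results)


def mean_reciprocal_rank(results: Results, relevant: Labels) -> float:
    """Average of 1/rank of the first relevant chunk (0 when none is retrieved)."""
    total = 0.0
    for q, ranked in results.items():
        for rank, chunk_id in enumerate(ranked, start=1):
            if chunk_id in relevant[q]:
                total += 1.0 / rank
                break
    return total / len(results)


# Hypothetical labeled queries and two retrieval runs over the same document subset.
relevant = {"q1": {"c1"}, "q2": {"c7"}, "q3": {"c4", "c5"}}
baseline = {"q1": ["c2", "c1", "c3"], "q2": ["c8", "c9", "c3"], "q3": ["c4", "c1", "c2"]}
candidate = {"q1": ["c1", "c2", "c3"], "q2": ["c3", "c7", "c9"], "q3": ["c5", "c4", "c2"]}

for name, run in (("baseline", baseline), ("candidate", candidate)):
    print(name,
          f"hit@3={hit_rate_at_k(run, relevant, k=3):.2f}",
          f"mrr={mean_reciprocal_rank(run, relevant):.2f}")
```

Keeping the labeled query set fixed between runs is what makes the comparison meaningful: only the chunking or enrichment strategy should change from one run to the next.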

Ready to move from theory to implementation? The best practices for knowledge management we've covered require powerful, specialized tools. ChunkForge is an enterprise-grade platform built to operationalize these advanced chunking and enrichment strategies, providing the visual tools, quality assurance frameworks, and pipeline controls you need to transform your raw documents into a high-performance RAG knowledge base. Explore how to build a truly retrieval-ready foundation at ChunkForge.